You launch a new program. Teachers are trained. Students log in. Implementation looks strong.

Six months later, outcomes are pretty good. Most students are above the benchmark.

It feels like success. Then someone asks, “What were students’ starting points?”

That question is where rigorous education program evaluation begins.

Baseline data is the foundation that determines whether your impact claims hold up in a renewal meeting, a budget review, a procurement conversation, or the moment a superintendent asks, “Are we sure this caused the change?”

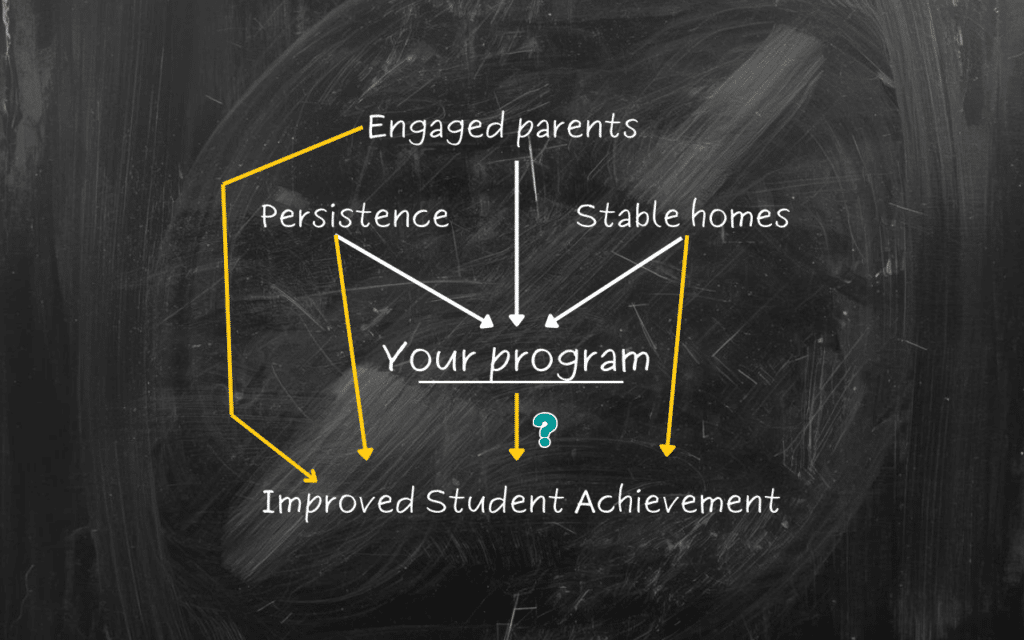

In K–12 education, improvement is always happening. Students mature. Teachers refine practice. Schools adopt new materials. District priorities shift. External supports come and go. Even when nothing “new” is introduced, year-over-year gains often appear simply because students grow and systems adjust.

So the problem is rarely whether outcomes improved. The real problem is whether your program deserves credit for that improvement.

What Is Baseline Data in Education Program Evaluation?

Baseline data refers to measures collected before a program, product, or intervention begins. It is the snapshot of where students were at the start, before the new input entered the system.

In practice, baseline data may include:

- Prior achievement scores or benchmark assessments

- Attendance rates and chronic absenteeism status

- Behavioral referrals, suspensions, and incident counts

- Course grades and credit accumulation

- Graduation risk indicators

- English learner growth measures (where relevant)

- Special education service markers (where relevant)

- EdTech usage patterns before structured rollout

Baseline data answers a basic question: where were students before the program started? Without that reference point, any post-implementation result floats without context.

A reading proficiency rate rising from 62% to 70% can look meaningful. But if comparable students in the same district typically rise from 60% to 69% over the same period, the program may be adding far less value than the headline suggests.
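
The arithmetic behind that comparison can be made explicit. The sketch below is a simple difference-in-differences calculation that uses only the illustrative percentages from the paragraph above; the function name `excess_gain` is hypothetical and chosen for this example.

```python
def excess_gain(program_before, program_after, comparison_before, comparison_after):
    """Return the program group's gain minus the comparison group's gain,
    in percentage points (a simple difference-in-differences)."""
    program_gain = program_after - program_before
    comparison_gain = comparison_after - comparison_before
    return program_gain - comparison_gain


# Figures from the example above: 62% -> 70% versus 60% -> 69%.
headline_gain = 70 - 62                  # 8 percentage points
net_gain = excess_gain(62, 70, 60, 69)   # 8 - 9 = -1 percentage point

print(f"Headline gain: {headline_gain} points")
print(f"Gain beyond comparable students: {net_gain} points")
```

With these particular numbers the net figure is slightly negative: the program group gained 8 points while comparable students gained 9, so the headline gain overstates the program's contribution.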

Baseline data creates the anchor that makes comparisons fair.

It also forces clarity about what you are actually trying to change. A program designed to reduce absenteeism should not be judged primarily by test score changes. A behavior intervention should not live or die by graduation outcomes alone. Baseline metrics help align evaluation to intent.

Strong educational impact evaluations don’t need to track everything. Instead, they should select baseline measures that match the program’s theory of change and the district’s decision needs.

Why Baseline Data Is Essential for Causal Inference

Consider a common scenario. Students using an EdTech tool outperform non-users at the end of the year. That sounds promising. But if those users began the year above grade level, their higher end-of-year scores may reflect where they started, not what the tool changed.

Or consider a nonprofit mentoring program. Participants show improved attendance. But if participants were selected because they already had stable routines or stronger adult support, the program’s contribution can be overstated.

Baseline data makes it possible to adjust for pre-existing differences.

That adjustment is the difference between a correlation and a defensible impact estimate.
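
One of the simplest forms of adjustment is to compare gains from baseline rather than end-of-year scores. The sketch below is a minimal illustration, not a full evaluation model: the scores are synthetic values invented for this example, and the group labels `users` and `non_users` are hypothetical.

```python
from statistics import fmean

# Synthetic (baseline, end-of-year) scores, invented for illustration only.
# Users of the tool start the year well ahead of non-users.
users = [(72, 78), (75, 81), (78, 84), (80, 86)]
non_users = [(60, 66), (62, 67), (65, 71), (67, 73)]


def mean_end_score(group):
    return fmean(end for _, end in group)


def mean_gain(group):
    return fmean(end - baseline for baseline, end in group)


# Naive comparison: ignores where each group started.
naive_gap = mean_end_score(users) - mean_end_score(non_users)

# Baseline-adjusted comparison: how much did each group grow?
gain_gap = mean_gain(users) - mean_gain(non_users)

print(f"Gap in end-of-year scores: {naive_gap:.2f} points")
print(f"Gap in gains from baseline: {gain_gap:.2f} points")
```

In this synthetic data the end-of-year gap is large while the gap in gains is close to zero, which is the pattern the EdTech scenario above describes. Gain scores are only one option; regression adjustment and matching use the same baseline information in more flexible ways.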

It’s also why baseline data has to be chosen thoughtfully. A baseline measure that is unstable, inconsistently collected, or only loosely tied to the program’s goals can weaken conclusions.

Baseline Data Reduces False Positives in EdTech Impact Evaluation

In EdTech impact evaluation, one of the most common shortcuts is comparing high-usage students to low-usage students and treating the difference as impact. But usage is not random.

Students who engage more often may be more motivated. They may have stronger supports at home. Teachers may encourage higher-performing students to use the tool more. Some schools may implement with greater fidelity. In other words, usage patterns often reflect differences that existed before the tool was ever introduced.

When baseline achievement and other pre-intervention variables are incorporated into analysis, the apparent “usage effect” often shrinks or disappears. That doesn’t mean the tool has no value. It means the original comparison was confounded.

Baseline data enables evaluators to compare similar students: those with comparable starting achievement, attendance histories, demographic profiles, and behavior patterns. This strengthens EdTech evidence of effectiveness and protects against inflated claims that collapse under external review.
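
Comparing similar students can be as simple as grouping them into baseline bands and comparing usage levels within each band. The sketch below assumes a single baseline score and a hypothetical cutoff of 50; the records are synthetic and exist only to show how a pooled usage gap can shrink once students are compared with peers who started in the same place.

```python
from collections import defaultdict
from statistics import fmean

# Synthetic records: (baseline score, usage level, end-of-year score).
# High-usage students are concentrated among higher baseline scorers.
students = [
    (40, "low", 46), (42, "low", 48), (44, "low", 50), (43, "high", 50),
    (70, "low", 76), (71, "high", 77), (72, "high", 78), (73, "high", 79),
]


def usage_gap(rows):
    """Mean end-of-year score of high-usage minus low-usage students."""
    high = [end for _, usage, end in rows if usage == "high"]
    low = [end for _, usage, end in rows if usage == "low"]
    return fmean(high) - fmean(low)


def baseline_band(score, cutoff=50):
    # The cutoff is an assumption for this sketch, not a recommended value.
    return "below_cutoff" if score < cutoff else "at_or_above_cutoff"


# Pooled comparison that ignores baseline.
naive_gap = usage_gap(students)

# The same comparison, made separately within each baseline band.
bands = defaultdict(list)
for row in students:
    bands[baseline_band(row[0])].append(row)
within_band_gaps = {band: usage_gap(rows) for band, rows in bands.items()}

print(f"Pooled usage gap: {naive_gap:.1f} points")
for band, gap in within_band_gaps.items():
    print(f"Usage gap within {band}: {gap:.1f} points")
```

In a real evaluation the bands would typically be defined on several baseline measures at once, and each band would need enough high-usage and low-usage students to support a comparison.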

Baseline Data and Nonprofit Program Evaluation

For nonprofit leaders seeking program evaluation services, baseline data plays the same role: it turns improvement stories into defensible evidence.

Funders increasingly require results that show measurable impact beyond anecdotal success. In many cases they are asking not just for youth outcomes but for evidence that outcomes improved because of the program.

If a tutoring program improves math scores, baseline data allows evaluators to test whether:

- participants improved relative to similar non-participants

- growth accelerated beyond expected trends

- effects were stronger for specific subgroups

- results were consistent across schools or concentrated in certain contexts

That precision strengthens the impact metrics in donor reports and increases competitiveness for grant funding.

It also supports stronger partnerships with districts. District leaders do not have time to debate every methodological choice, but they understand fairness. They understand “compared to what?” Baseline data helps answer that question clearly.

When a reviewer asks, “How do you know this wasn’t regression to the mean?” or “How do you know these students wouldn’t have improved anyway?” baseline data becomes part of the response.
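
Regression to the mean is easy to demonstrate with a small simulation. The sketch below is illustrative only: it assumes each test score is a stable skill level plus independent test-day noise, and the distribution parameters are arbitrary choices for this example. No program is applied to anyone, yet the students selected for low baseline scores still appear to improve.

```python
import random
from statistics import fmean

random.seed(0)  # fixed seed so the sketch is reproducible

# Simulated students: stable underlying skill plus independent test-day noise.
# No program exists here, so any apparent "gain" comes from noise alone.
students = []
for _ in range(2000):
    skill = random.gauss(50, 10)
    baseline = skill + random.gauss(0, 8)
    followup = skill + random.gauss(0, 8)
    students.append((baseline, followup))

# Select the lowest-scoring 10% at baseline, as a need-based program might.
students.sort(key=lambda s: s[0])
selected = students[:200]

selected_gain = fmean(followup - baseline for baseline, followup in selected)
overall_gain = fmean(followup - baseline for baseline, followup in students)

print(f"Average gain, lowest 10% at baseline: {selected_gain:.1f} points")
print(f"Average gain, all students: {overall_gain:.1f} points")
```

A comparison group drawn from students with similarly low baseline scores would show the same rebound, which is why a baseline-matched comparison, rather than the raw gain, is what answers the reviewer's question.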

Baseline Data and Comparison Groups

Baseline data becomes most powerful when paired with well-constructed comparison groups.

Randomized controlled trials are often unrealistic in K–12 settings. Withholding supports raises ethical concerns. Scheduling constraints limit flexibility. Program eligibility is often determined by need, not randomness. Implementation is driven by operational realities long before evaluators are invited into the conversation.

In the absence of randomization, quasi-experimental designs can use baseline data to create comparable treatment and comparison groups. This commonly involves matching students based on:

- prior achievement

- demographics

- attendance patterns

- behavioral history

- school context and grade level

- other relevant pre-intervention indicators

The goal is to reduce bias enough to make conclusions meaningful.
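
Matching on the indicators listed above can be sketched as a greedy one-to-one nearest-neighbor match. This is a simplified illustration, not a recommended production method: it matches on prior score within the same grade only, the `caliper` of 5 points is an arbitrary assumption, and the field names and student records are hypothetical.

```python
def match_on_baseline(participants, pool, caliper=5):
    """Greedy 1:1 nearest-neighbor matching without replacement.

    Each participant is paired with the unused comparison student in the
    same grade whose prior score is closest, provided the difference is
    within the caliper. Participants with no such student stay unmatched.
    """
    available = list(pool)
    matches = []
    for p in participants:
        candidates = [
            c for c in available
            if c["grade"] == p["grade"]
            and abs(c["prior_score"] - p["prior_score"]) <= caliper
        ]
        if not candidates:
            continue  # no comparable student; do not force a poor match
        best = min(candidates, key=lambda c: abs(c["prior_score"] - p["prior_score"]))
        available.remove(best)
        matches.append((p["id"], best["id"]))
    return matches


# Synthetic students, invented for illustration.
participants = [
    {"id": "P1", "grade": 4, "prior_score": 55},
    {"id": "P2", "grade": 4, "prior_score": 70},
    {"id": "P3", "grade": 5, "prior_score": 40},
]
pool = [
    {"id": "C1", "grade": 4, "prior_score": 57},
    {"id": "C2", "grade": 4, "prior_score": 68},
    {"id": "C3", "grade": 5, "prior_score": 60},
    {"id": "C4", "grade": 4, "prior_score": 90},
]

matches = match_on_baseline(participants, pool)
print(matches)
```

Here the third participant is left unmatched because the only fifth-grade comparison student started 20 points higher. Dropping an unmatched student is usually preferable to pairing students who are not actually comparable, though the number dropped should be reported.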

Baseline data is what makes “comparison” more than a label. Without baseline data, post-implementation differences may reflect pre-existing disparities rather than program effects.

Common Mistakes With Baseline Data That Undermine Evaluation

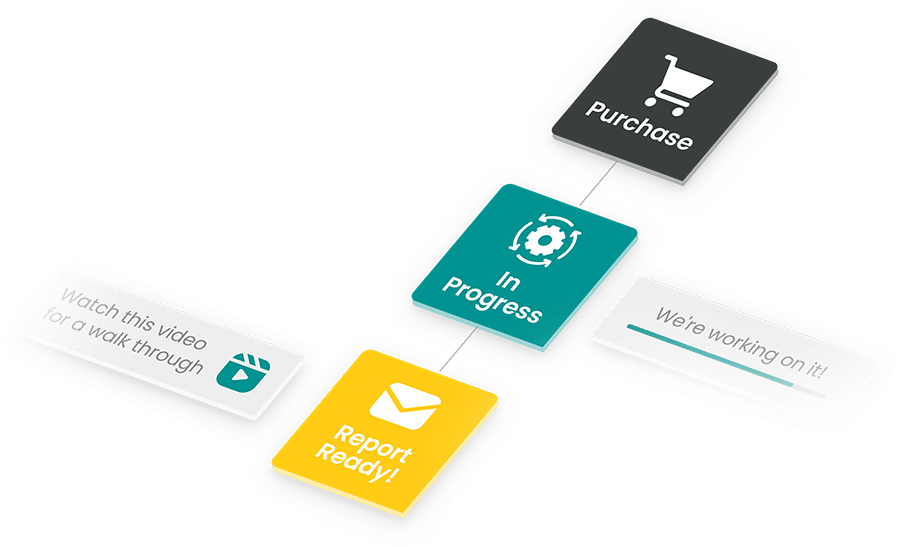

Issues with baseline data are common, but they are also avoidable. The most frequent ones are:

- Collecting baseline data after implementation begins. Early exposure contaminates the starting measure. Once students begin receiving the intervention, the “baseline” is no longer truly pre-intervention.

- Using inconsistent metrics pre- and post-intervention. If instruments change, comparability suffers. Small measurement shifts can create false signals.

- Ignoring subgroup baseline variation. Aggregate baseline metrics can mask meaningful differences by grade level, school, or student group. A program can look neutral overall while producing strong effects for one subgroup and little or no effect, or even a negative one, for another.

- Overloading on new data collection. Many districts already have the necessary baseline metrics in existing systems. Creating new surveys or measures can increase burden without improving rigor.
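
The first two mistakes in this list lend themselves to a simple automated check before analysis begins. The sketch below is a minimal example under stated assumptions: the program start date, field names, instrument names, and records are all hypothetical and would need to be mapped to whatever a district's data system actually stores.

```python
from datetime import date

PROGRAM_START = date(2024, 9, 3)  # hypothetical rollout date

# Synthetic records, invented for illustration.
records = [
    {"student_id": "S1", "baseline_date": date(2024, 8, 20),
     "baseline_instrument": "Benchmark A", "followup_instrument": "Benchmark A"},
    {"student_id": "S2", "baseline_date": date(2024, 9, 17),
     "baseline_instrument": "Benchmark A", "followup_instrument": "Benchmark A"},
    {"student_id": "S3", "baseline_date": date(2024, 8, 22),
     "baseline_instrument": "Benchmark A", "followup_instrument": "Benchmark B"},
]


def baseline_problems(record, program_start):
    """Return a list of baseline issues found for one student record."""
    problems = []
    if record["baseline_date"] >= program_start:
        problems.append("baseline collected on or after program start")
    if record["baseline_instrument"] != record["followup_instrument"]:
        problems.append("different instruments before and after")
    return problems


# Keep only the students with at least one problem.
flagged = {}
for record in records:
    problems = baseline_problems(record, PROGRAM_START)
    if problems:
        flagged[record["student_id"]] = problems

for student_id, problems in flagged.items():
    print(student_id, "->", "; ".join(problems))
```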

Effective program evaluation prioritizes high-quality, relevant baseline measures drawn from existing data sources and aligned to decision needs. Baseline data should feel useful, not exhausting.

Baseline Data as Strategic Infrastructure

For school districts, baseline data supports confident renewal and discontinuation decisions. It clarifies what adds value above the status quo, what is redundant, and what produces gains only in certain settings.

For EdTech companies, baseline data strengthens competitive differentiation and reduces churn risk by showing measurable value relative to starting conditions. It also supports product development. If effects vary by baseline achievement, that informs onboarding, teacher supports, and implementation design.

For nonprofits, baseline data strengthens impact reporting and guides scaling decisions. Programs that work best in certain contexts can expand intentionally, rather than broadly and blindly.

Across the ecosystem, baseline data turns evaluation from compliance into a growth tool. It enables practical questions: which programs accelerate growth most effectively, for which students, under what conditions, and relative to what alternative?

Those are strategy questions. They are also the questions decision-makers are already asking.

Setting Your Team Up for Success

Baseline data requires intentionality at the beginning. It involves:

- defining outcome metrics clearly

- identifying existing data sources

- aligning timeframes

- planning for fair comparisons

- confirming data quality and completeness

When these pieces are set early, evaluation becomes smoother, faster, and more credible. The alternative is reconstructing baselines retroactively, which creates uncertainty, debate, and weaker confidence in findings.
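
Confirming completeness early can be a short check run against existing data. The sketch below assumes a roster where a missing baseline value is stored as `None`; the measure names and records are hypothetical examples, not a required schema.

```python
# Synthetic roster, invented for illustration. None marks a missing value.
roster = [
    {"student_id": "S1", "prior_score": 61, "attendance_rate": 0.95},
    {"student_id": "S2", "prior_score": None, "attendance_rate": 0.88},
    {"student_id": "S3", "prior_score": 74, "attendance_rate": None},
    {"student_id": "S4", "prior_score": 58, "attendance_rate": 0.91},
]
measures = ["prior_score", "attendance_rate"]


def completeness(rows, measure_names):
    """Share of students with a non-missing value for each baseline measure."""
    return {
        name: sum(row.get(name) is not None for row in rows) / len(rows)
        for name in measure_names
    }


rates = completeness(roster, measures)

# Students usable in an analysis that needs every baseline measure.
complete_cases = [
    row["student_id"] for row in roster
    if all(row.get(name) is not None for name in measures)
]

for name, rate in rates.items():
    print(f"{name}: {rate:.0%} complete")
print("Students with all baseline measures:", complete_cases)
```

Running a check like this before the program starts leaves time to fill gaps from existing systems, instead of discovering them once the baseline window has closed.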

In education, momentum matters. Decisions move quickly.

Baseline data makes sure that when those decisions arrive, you are not relying on surface-level trends or hopeful interpretation. You are relying on structured, defensible evidence grounded in where students began.