You review the report and feel cautiously optimistic. Scores are up. Attendance looks better. Behavioral incidents are trending down. By every surface-level indicator, the program seems to be working.

Then someone asks an important, unavoidable question: “How do we know this wouldn’t have happened anyway?”

That question is why control groups exist. And it’s why so many education impact conversations quietly fall apart when they reach the decision-making stage.

In K–12 education, improvement is the default. Students grow. Teachers refine practice. Districts layer initiatives on top of one another. Year-over-year gains happen even when nothing new is introduced. The problem is not whether students improved but whether a specific program deserves credit for that improvement.

That distinction matters more than most people realize, especially when purchasing decisions, renewals, grant funding, and public accountability are on the line.

Control groups are not an academic obsession. They are a practical safeguard against false confidence. They are how education program evaluation moves from storytelling to defensible decision-making.

This article is about what control groups actually do, why they matter in real school systems, and how to think about them without falling into the trap of waiting years for “perfect” studies that arrive too late to matter.

Why Control Groups Matter in Education Evaluation

Most education programs have students who show improvement over time. That’s not a controversial statement. In fact, it’s one of the biggest reasons education program evaluation is so difficult.

When outcomes improve, it’s tempting to assume the newest initiative caused the change. After all, the timing lines up. Scores were down. The program launched. Scores went up. End of story. Right?

Except it rarely is.

Students mature. Teachers adjust instruction. Schools adopt new materials. District priorities shift. External supports come and go. All of these forces influence outcomes simultaneously. When you look only at pre-to-post gains, you’re observing the impact of everything that happened to students, not isolating the effect of any one intervention.

This is where many well-intentioned evaluations go wrong. They mistake correlation for impact. They credit programs for growth that may have occurred anyway.

That mistake isn’t harmless. It might not appear right away, but it shows up later, when decisions carry consequences.

District leaders must justify purchases during budget season. EdTech companies need evidence that survives procurement scrutiny. Nonprofits rely on impact data to secure grants and donor funding. In those moments, “scores went up” is not enough.

Control groups exist to answer the harder question: compared to what?

They are not about academic purity. They are about protecting decision-makers from overclaiming impact and making investments they cannot defend later.

What is a Control Group in Education?

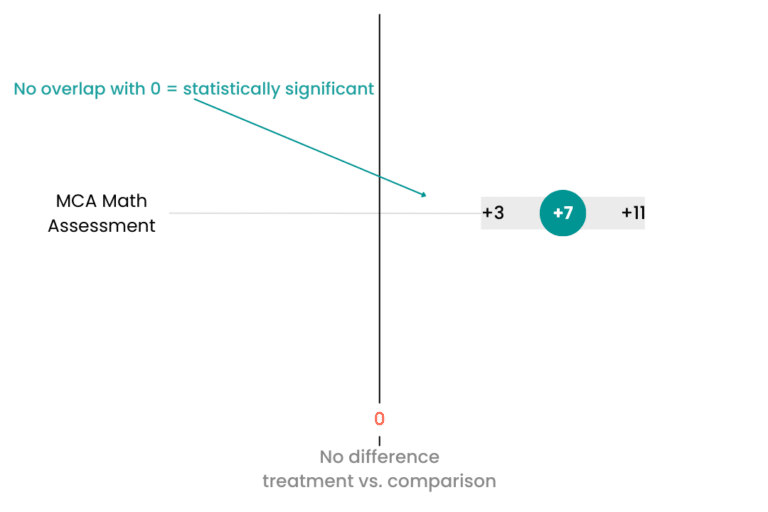

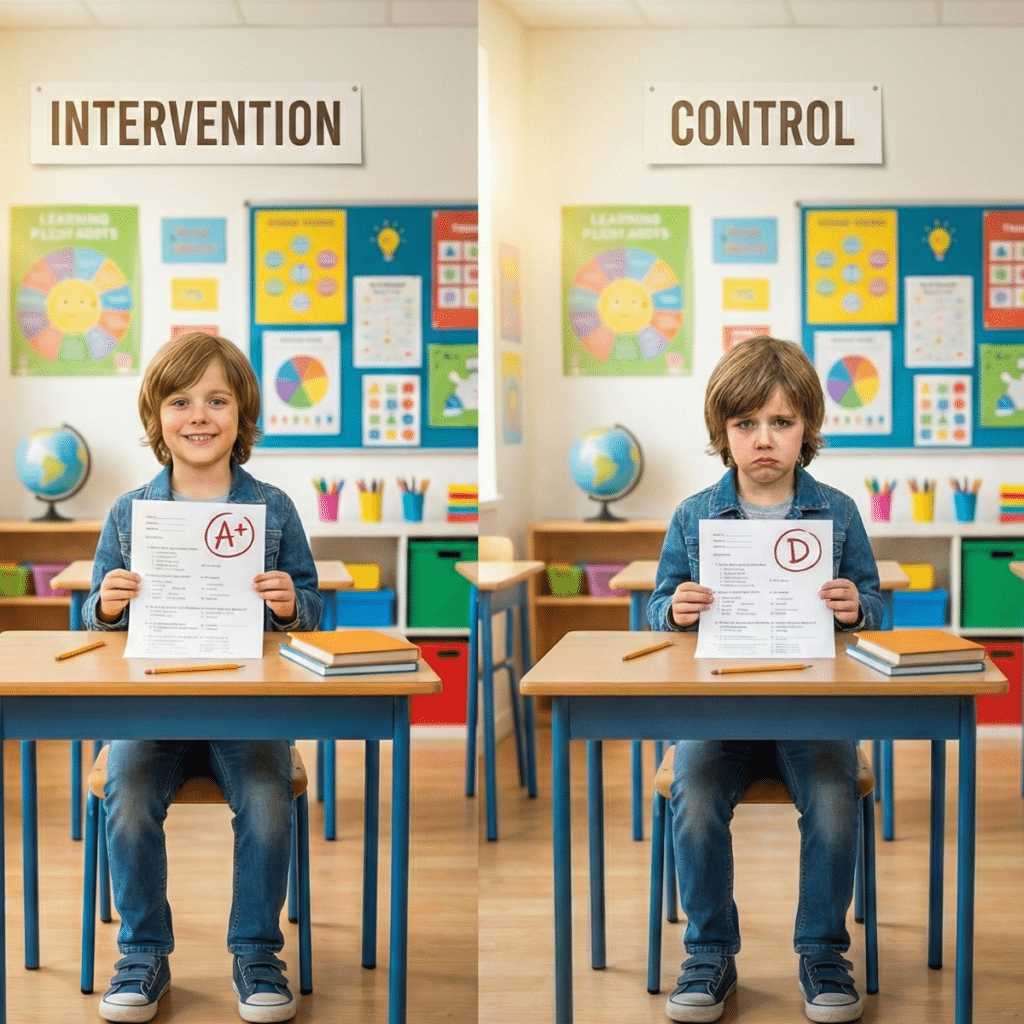

A control group is a group of students who did not receive the program being evaluated. Their outcomes are compared to those of students who did receive the program, often referred to as the treatment group.

The overall goal here is fairness. A fair comparison asks whether students who participated in the program performed better than similar students who did not, given the same environment, timeline, and broader district context.

In an ideal world, students are randomly assigned to treatment and control groups. Random assignment ensures that, on average, the groups are equivalent before the program begins. Any difference observed afterward can reasonably be attributed to the program itself.
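To make the "equivalent on average" idea concrete, here is a minimal simulation sketch. The scores are simulated rather than real, and the names (`baseline_scores`, `treatment_ids`, `control_ids`) are illustrative assumptions, not part of any specific evaluation tool.

```python
import random
from statistics import mean

random.seed(7)  # fixed seed so the sketch is reproducible

# Hypothetical baseline scores for 1,000 students (simulated, not real data).
baseline_scores = [random.gauss(400, 30) for _ in range(1000)]

# Random assignment: shuffle the roster, then split it in half.
roster = list(range(len(baseline_scores)))
random.shuffle(roster)
treatment_ids = roster[:500]
control_ids = roster[500:]

# Compare the groups before any program is delivered.
treatment_baseline = mean(baseline_scores[i] for i in treatment_ids)
control_baseline = mean(baseline_scores[i] for i in control_ids)
baseline_gap = treatment_baseline - control_baseline

print(f"Treatment baseline: {treatment_baseline:.1f}")
print(f"Control baseline:   {control_baseline:.1f}")
print(f"Gap before program: {baseline_gap:.1f}")
```

With groups this size, the starting gap should be small relative to the 30-point spread in scores, which is what makes a later difference in outcomes interpretable.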

In practice, random assignment is often unrealistic in K–12 settings. Ethical concerns arise when withholding support. Scheduling constraints limit flexibility. Program eligibility rules are set long before evaluators are invited into the conversation.

That reality does not invalidate evaluation. On the contrary, it means that you need a different approach.

At the heart of all control group logic is the counterfactual. The counterfactual asks: what would have happened to these same students if the program had never been implemented?

Because we can’t observe that alternate timeline directly, control groups serve as the closest approximation.

For readers who want to see how the field formally defines evidence quality and comparison standards, the What Works Clearinghouse Standards Handbook provides a useful reference point for how impact evidence is evaluated across education research.

Why Pre-to-Post Gains Are Not Enough

Pre-to-post comparisons feel intuitive: measure outcomes before the program starts, measure them again after implementation, and take the difference.

The problem is that this approach quietly assumes nothing else changed. In real schools, everything changes.

Students gain reading fluency simply by aging into more exposure. Teachers respond to assessment data and adjust instruction. New curricular materials roll out. District initiatives overlap. External tutoring programs appear. Behavioral supports evolve.

When outcomes improve under these conditions, it is impossible to know how much of that improvement is attributable to the program being evaluated. This is how programs accidentally take credit for normal growth, how effectiveness gets overstated, and how renewal decisions become vulnerable to scrutiny later.

Consider a common example. Reading scores rise from fall to spring among students using a literacy tool. On paper, the program looks effective. But when those same gains are compared to similar students who did not use the tool, the difference disappears.

Without a control group, that distinction remains invisible.
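The literacy example can be sketched with a few lines of arithmetic. The scores below are made-up illustrative numbers, and `average_gain` is a hypothetical helper. A real evaluation would use far more students and account for statistical uncertainty.

```python
from statistics import mean

# Hypothetical (fall, spring) reading scores; illustrative numbers only.
tool_users = [(410, 438), (395, 421), (430, 455), (402, 431)]
comparison = [(408, 435), (397, 424), (428, 454), (404, 432)]

def average_gain(students):
    """Mean spring-minus-fall gain for a list of (fall, spring) pairs."""
    return mean(spring - fall for fall, spring in students)

pre_post_gain = average_gain(tool_users)      # what a pre-to-post report shows
comparison_gain = average_gain(comparison)    # growth without the tool
estimated_effect = pre_post_gain - comparison_gain

print(f"Tool users gained:       {pre_post_gain}")
print(f"Comparison group gained: {comparison_gain}")
print(f"Gain relative to comparison: {estimated_effect}")
```

In this made-up data both groups gain 27 points, so the gain attributable to the tool is zero even though the pre-to-post report looks strong.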

This is not a technical flaw. It is a conceptual one. Program evaluation best practices exist precisely to prevent these misinterpretations, especially when decisions depend on the results.

The Control Group as the Counterfactual

The word “counterfactual” sounds abstract, but the idea is pretty easy once you understand it.

Imagine pressing pause on reality and asking what would have happened if the program had never existed. Would students have grown anyway? Would the same trends appear?

In controlled lab settings, counterfactuals are easier to approximate. In K–12 education, where students are embedded in complex systems, they are harder to isolate and more important than ever.

That’s why control groups emphasize student-level fairness. Comparisons are strongest when students share the same district, attend the same schools, sit in the same grades, and begin at similar performance levels.

This approach reduces bias; it does not eliminate noise entirely.

When done well, it enables more credible edtech evidence of effectiveness and strengthens nonprofit impact reporting. It shifts the conversation from “did outcomes improve” to “did this program change outcomes relative to the status quo.”

That shift is where impact claims become defensible.

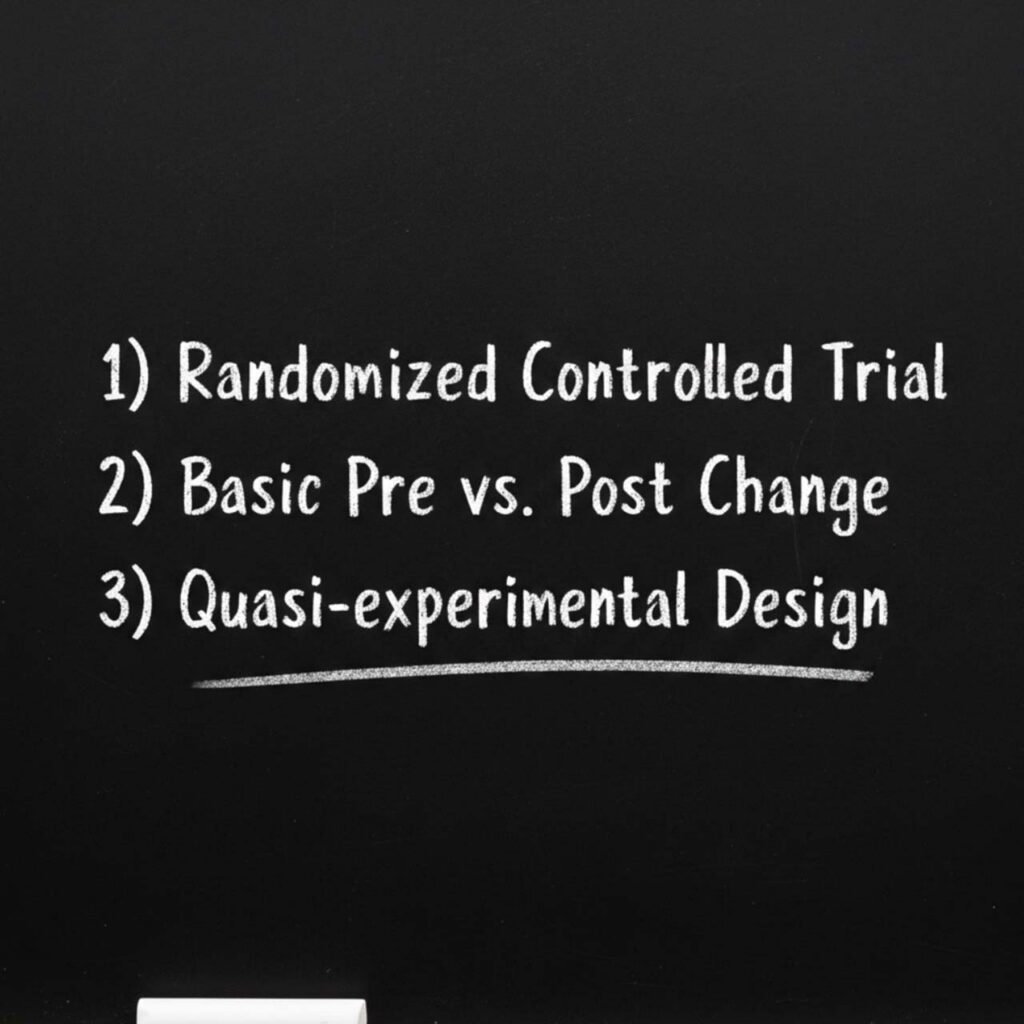

When Randomized Control Groups Aren’t Possible

Most districts cannot randomly assign students to programs. That reality deserves acknowledgment, not dismissal.

Ethical concerns arise when support is withheld. Scheduling constraints limit flexibility. Program eligibility is often determined by several factors, not randomness.

This is where comparison groups enter the picture.

Comparison groups are not randomly assigned but are constructed to resemble the treatment group as closely as possible using existing data. Matching often considers prior achievement, demographic characteristics, attendance patterns, and behavior history.

The goal is to approximate a randomized comparison closely enough to support meaningful conclusions.
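One simple way to build such a group is greedy nearest-neighbor matching, sketched below. This is an illustration under stated assumptions, not a recommended production method: the `Student` fields, the `max_score_gap` threshold, and the function name are all hypothetical, and rigorous evaluations typically use more covariates and check balance after matching.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Student:
    student_id: str
    grade: int
    prior_score: float
    attendance_rate: float

def match_comparison_group(treated, pool, max_score_gap=10.0):
    """Greedy 1:1 nearest-neighbor match within grade, without replacement."""
    available = list(pool)
    matches = {}
    for t in treated:
        # Only consider same-grade students with a similar prior score.
        candidates = [
            c for c in available
            if c.grade == t.grade
            and abs(c.prior_score - t.prior_score) <= max_score_gap
        ]
        if not candidates:
            continue  # treated students with no close match are left out
        # Closest prior score wins; attendance breaks ties.
        best = min(
            candidates,
            key=lambda c: (abs(c.prior_score - t.prior_score),
                           abs(c.attendance_rate - t.attendance_rate)),
        )
        matches[t.student_id] = best.student_id
        available.remove(best)  # each comparison student is used once
    return matches

# Hypothetical records for illustration only.
treated = [
    Student("T1", 3, 410.0, 0.95),
    Student("T2", 3, 450.0, 0.90),
    Student("T3", 4, 500.0, 0.92),
]
pool = [
    Student("C1", 3, 412.0, 0.94),
    Student("C2", 3, 447.0, 0.80),
    Student("C3", 4, 530.0, 0.93),
    Student("C4", 3, 420.0, 0.70),
]

matches = match_comparison_group(treated, pool)
print(matches)
```

Here T3 goes unmatched because no fourth grader in the pool started within 10 points. Dropping unmatched students narrows who the result applies to, which is worth reporting alongside the estimate.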

Federal guidance recognizes well-designed comparison groups as a valid approach when random assignment is not feasible. The What Works Clearinghouse provides guidance on quasi-experimental designs and how matching can meet evidence standards when executed rigorously.

In practice, this approach reflects how districts actually operate. It respects constraints while still demanding methodological seriousness.

Why Usage or Dosage Comparisons Are Misleading

One of the most common shortcuts in education program evaluation is comparing high-usage students to low-usage students. It feels like the right comparison because if students who used the tool more showed greater gains, then you’ve got the answer right in front of you.

Unfortunately, that logic rarely holds up in front of a board or in an investor meeting.

Usage is not random. Students who engage more often are often more motivated, have stronger support systems, or receive more teacher encouragement. Teachers may direct higher-performing students to use tools more frequently. Schools may prioritize certain populations for deeper implementation.

When these factors are not accounted for, usage comparisons confound motivation with impact.

This is why low-usage is not a control group. It does not answer the counterfactual question. It often inflates edtech impact claims by attributing existing advantages to the tool itself.
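A small simulation shows how this can happen. In the sketch below the tool is assumed to have no effect at all (`TRUE_TOOL_EFFECT = 0.0`), and an unobserved motivation level drives both usage and growth. Every number and variable name is an illustrative assumption.

```python
import random
from statistics import mean, median

random.seed(11)  # fixed seed so the sketch is reproducible

TRUE_TOOL_EFFECT = 0.0  # assumption: the tool adds nothing in this simulation

students = []
for _ in range(2000):
    motivation = random.random()  # unobserved trait between 0 and 1
    # More motivated students log more minutes on the tool.
    usage_minutes = max(0.0, 100 * motivation + random.gauss(0, 10))
    # Growth depends on motivation, not on the tool.
    gain = (20 + 10 * motivation
            + TRUE_TOOL_EFFECT * usage_minutes
            + random.gauss(0, 3))
    students.append((usage_minutes, gain))

# Split students at the median usage level.
cutoff = median(usage for usage, _ in students)
high_usage_gains = [gain for usage, gain in students if usage > cutoff]
low_usage_gains = [gain for usage, gain in students if usage <= cutoff]

usage_gap = mean(high_usage_gains) - mean(low_usage_gains)
print(f"High-usage minus low-usage gain: {usage_gap:.1f} points")
```

High-usage students outgain low-usage students by several points even though the simulated tool does nothing. The gap reflects who chose to use the tool, not what the tool did.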

Evaluations that rely solely on edtech usage vs outcome data may look persuasive internally, but they tend to collapse under external scrutiny.

What the Comparison Group Receives Actually Matters

Control groups are often misunderstood as students who receive nothing. That is rarely how it works. In a real classroom, students are always receiving something: another intervention, teacher-led supports, district initiatives, or supplemental services. Impact is always relative.

Take, for example, a Tier 2 reading intervention software program. If comparison students receive no additional support, the software may look highly effective. If comparison students receive a different Tier 2 intervention that is also effective, the software may appear ineffective by comparison.

The software didn’t fail. The comparison context changed.
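The same point as arithmetic, using made-up gains for illustration. The 12-point software gain and both comparison gains are assumptions, not results from any study.

```python
# Hypothetical average gains in scale-score points (illustrative only).
software_gain = 12.0

status_quo_gains = {
    "no additional support": 5.0,
    "alternative Tier 2 intervention": 12.0,
}

# Estimated impact is always the gain relative to what comparison students got.
relative_effects = {
    context: software_gain - gain
    for context, gain in status_quo_gains.items()
}

for context, effect in relative_effects.items():
    print(f"Versus {context}: {effect:+.1f} points")
```

The software's own gain is identical in both rows. Only the baseline it is measured against differs.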

Impact varies across districts precisely because the status quo varies. Different alternatives produce different baselines. Different supports shift what “adding value” looks like.

This is one of the strongest arguments for rapid-cycle evaluation approaches, which measure whether programs continue to add value above an ever-changing landscape.

Why Rapid-Cycle Evaluation Matters More Than Perfect Control

Education systems do not wait for perfect evidence. Decisions follow budget and contract calendars, not research timelines.

Waiting years for a “gold standard” study often means missing the window entirely. Budgets close. Contracts renew. Programs expand or disappear.

Rapid-cycle evaluation exists to align evidence with decision-making reality, so you are not waiting years for perfect data.

By leveraging existing data and pragmatic comparison designs, organizations can assess whether programs are adding value right now and adjust strategy accordingly.

This emphasis on timely, usable evidence aligns with how districts interpret ESSA evidence tiers. Quasi-experimental studies can provide meaningful, decision-ready evidence without waiting years for randomized trials. That’s a major win, especially when the decisions at stake could affect students for many years to come.

Waiting for perfect evidence is often the least evidence-based decision of all.

How Control Groups Support Better Decisions

When used well, control groups change how decisions are made across the education ecosystem.

For school districts, they support confident renewal and purchasing decisions. They provide stronger budget defense and clarify student outcome trends that survive public scrutiny.

For EdTech companies, they offer credible, independent proof of impact. They strengthen sales and renewal conversations and reduce churn risk by replacing enthusiasm with evidence.

For educational nonprofits, they produce grant-ready impact data. They strengthen donor reporting and clarify where programs are most effective, enabling smarter growth.

In all cases, control groups transform evaluation from a compliance exercise into a strategic asset.

Control Groups as a Growth Tool Rather Than Judgment

Control groups exist to clarify value. They help organizations understand where programs work best, for whom, and under what conditions, and support continuous improvement rather than post-hoc justification.

Most importantly, they reinforce integrity and transparency in a field where trust matters.

The right evaluation helps you make better decisions while the opportunity still exists. Control groups are not the enemy of innovation; they are its safeguard.

When evidence becomes a strategic asset rather than a marketing artifact, organizations gain the confidence to invest, adapt, and grow with clarity. And that is what education program evaluation is ultimately for.