A district launches a math tutoring program for struggling 3rd graders. An edtech company wants to show that its literacy platform improves reading performance. A nonprofit needs evidence that its intervention is helping students succeed.

School districts, edtech companies, and nonprofits working with youth increasingly rely on educational program evaluation to determine whether programs actually improve student outcomes. That is why understanding statistical significance matters: it shapes how leaders interpret results and make decisions.

In data-driven decision-making in education, a single number is not enough. Leaders need to know whether the observed difference is likely to reflect a real program effect or whether it may simply be due to random chance.

The Core Challenge in Educational Impact Evaluation

When organizations conduct program evaluation for K-12 products or other initiatives, they run into two unavoidable challenges.

First, they do not have data on every student they ultimately care about.

A district may evaluate a tutoring pilot involving 200 students, but the real question is usually broader than that. Leaders want to know what the results suggest for future students, additional schools, and long-term implementation decisions. They are not focused only on the students included in the original study. They want to understand what results they can reasonably expect going forward.

That means evaluators must rely on a sample, not the full population of students they care about.

Second, evaluators never know the program’s true impact with complete certainty.

Even strong studies with appropriate data analysis produce an estimate, not absolute truth. If the same study were repeated with a different but similar group of students, the result would likely shift somewhat.

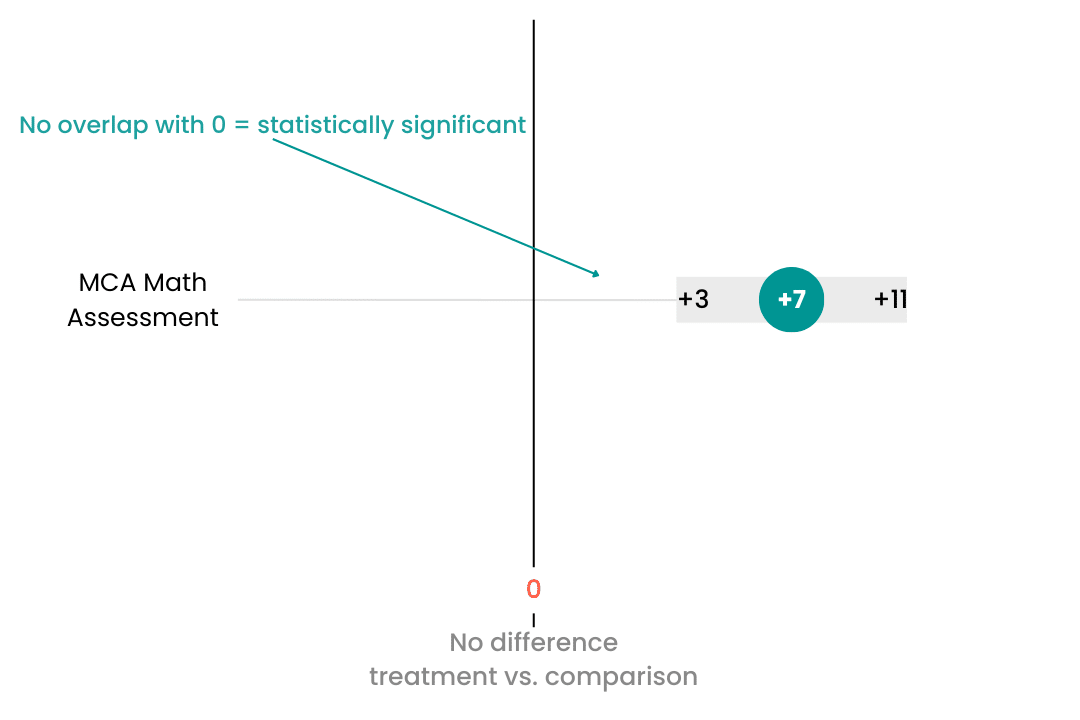

Imagine running the same tutoring evaluation ten different times with ten different samples of students. One study might show a +7 point improvement. Another might show +3 points. Another might show -3 points. All of those results are possible because samples vary.

This variation is not a sign that the analysis is flawed. It is simply part of working with real-world data.
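For readers who want to see this variation for themselves, the short simulation below repeats a made-up tutoring study ten times. It is only a sketch: the true effect of +3 points, the standard deviation of 15 points, and the 100 students per group are illustrative assumptions, not figures from any real evaluation.

```python
import random
import statistics

def simulate_study(true_effect, sd, n_per_group, rng):
    """Return the observed difference in mean growth for one simulated study."""
    # Hypothetical growth scores: tutored students gain `true_effect` on average.
    tutored = [rng.gauss(true_effect, sd) for _ in range(n_per_group)]
    comparison = [rng.gauss(0.0, sd) for _ in range(n_per_group)]
    return statistics.mean(tutored) - statistics.mean(comparison)

rng = random.Random(42)  # fixed seed so the run is repeatable

# Ten studies of the same program, each with a fresh sample of students.
estimates = [simulate_study(3.0, 15.0, 100, rng) for _ in range(10)]

for i, estimate in enumerate(estimates, start=1):
    print(f"Study {i}: {estimate:+.1f} points")
```

Each run of `simulate_study` draws a new sample, so the ten estimates scatter around the assumed true effect even though the program itself never changes.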

The job of statistical analysis is to help determine whether the observed result is large enough, relative to that natural variation, to count as convincing evidence of a real impact.

What Statistical Significance Actually Means

In plain language, statistical significance asks whether an observed result would be unusually large if the program had no real effect.

In many educational program evaluation studies, evaluators begin with what is called the null hypothesis. This is the assumption that the program made no difference. Under that assumption, the expected difference between students who received the intervention and those who did not would be zero.

So if an evaluation finds that the tutoring group grew 3 points more than the comparison group, the next question becomes: Is that +3 unusually large if the true effect is really zero?

If the answer is yes, the result is considered statistically significant. That means the observed difference is unlikely to be explained by chance alone, and the evaluator concludes there is evidence the program likely influenced student outcomes.
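One way to make the "unusually large if the true effect is zero" question concrete is a permutation test, sketched below. This is one common approach, not necessarily the method any particular evaluation uses. The growth scores are invented for illustration, and the function name `permutation_p_value` is hypothetical.

```python
import random
import statistics

def permutation_p_value(treated, comparison, n_shuffles=5000, seed=0):
    """Estimate how often chance alone produces a gap at least as large as observed."""
    rng = random.Random(seed)
    observed = statistics.mean(treated) - statistics.mean(comparison)
    pooled = list(treated) + list(comparison)
    n_treated = len(treated)
    extreme = 0
    for _ in range(n_shuffles):
        # Under the null hypothesis, group labels are arbitrary, so reshuffle them.
        rng.shuffle(pooled)
        diff = (statistics.mean(pooled[:n_treated])
                - statistics.mean(pooled[n_treated:]))
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add one to numerator and denominator so the estimate is never exactly zero.
    return observed, (extreme + 1) / (n_shuffles + 1)

# Made-up growth scores for ten tutored and ten comparison students.
tutored_growth = [8, 5, 9, 4, 7, 6, 10, 3, 7, 6]
comparison_growth = [4, 3, 5, 2, 6, 1, 4, 3, 5, 2]

observed_diff, p_value = permutation_p_value(tutored_growth, comparison_growth)
print(f"Observed difference: {observed_diff:+.1f} points, p-value: {p_value:.4f}")
```

The returned p-value is the share of reshuffled datasets whose gap is at least as large as the observed one. A small share suggests the observed difference would be unusual if the program had no effect.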

If the result is not statistically significant, the conclusion is more cautious. It means there is not enough evidence to confidently say the program produced the observed improvement.

A non-significant result does not mean the program failed. It does not prove that there was no impact. It simply means the evaluation did not produce strong enough evidence to rule out the possibility that the observed difference happened by chance.

A study may fail to reach statistical significance for several reasons. The sample may be too small. The program’s impact may be modest, and therefore harder to detect. Student outcomes may vary widely across classrooms or schools. These are common realities in K-12 education.
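The sample-size point can be illustrated with a rough calculation. The sketch below assumes the same +3 point difference is observed in two studies, that scores have a known standard deviation of 15 points, and that a normal approximation is adequate. All three are simplifying assumptions made for illustration.

```python
import math
from statistics import NormalDist

def two_sided_p_value(observed_diff, sd, n_per_group):
    """Approximate p-value for a difference in means between two equal-sized groups."""
    # Standard error of the difference shrinks as the groups get larger.
    standard_error = sd * math.sqrt(2 / n_per_group)
    z = observed_diff / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))

for n in (50, 400):
    p = two_sided_p_value(3.0, 15.0, n)
    print(f"{n} students per group: p = {p:.3f}")
```

Under these assumptions, the same +3 point difference gives a p-value of about 0.32 with 50 students per group and a p-value below 0.01 with 400 per group. The program did not change; only the amount of evidence did.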

So when an evaluation finds a non-significant result, the right takeaway is not “the program does nothing.” The right takeaway is “we do not yet have strong enough evidence to make that claim confidently.”

What Statistical Significance Does Not Mean

This is where many people overinterpret results.

A statistically significant finding does not automatically mean the result is important, meaningful, or worth acting on. It only means the result is unlikely to be explained by random variation alone.

For example, suppose an intervention produces a statistically significant gain of +3 points on a district assessment. That may be real. But is it enough to justify the cost of the program? Is it enough to support expansion across more schools? Is it large enough to matter relative to the district’s strategic priorities?

When thinking about the ROI of a particular product or program, leaders need more than proof that a difference exists. They need to know whether the magnitude of that difference is large enough to justify continued investment. They need to weigh impact against staffing demands, implementation complexity, scalability, and budget pressure.

In other words, statistical significance addresses whether a result is likely real. It does not tell you whether the result is practically meaningful.
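One common way to describe magnitude separately from significance is a standardized effect size such as Cohen's d, which expresses the difference in units of the outcome's standard deviation. The sketch below is illustrative only; the function name `cohens_d` and the tiny example lists are hypothetical.

```python
import math
import statistics

def cohens_d(treated, comparison):
    """Difference in means divided by the pooled standard deviation."""
    n1, n2 = len(treated), len(comparison)
    pooled_variance = (
        (n1 - 1) * statistics.variance(treated)
        + (n2 - 1) * statistics.variance(comparison)
    ) / (n1 + n2 - 2)
    mean_diff = statistics.mean(treated) - statistics.mean(comparison)
    return mean_diff / math.sqrt(pooled_variance)

# Tiny made-up example: a 1-point gap on an outcome with a pooled SD of 2 points.
effect = cohens_d([2, 4, 6], [1, 3, 5])
print(f"Cohen's d = {effect:.2f}")
```

As a point of reference, a +3 point gain on an assessment with a standard deviation of 15 points (an assumed value) would correspond to d = 0.2, which Cohen's widely used rule of thumb labels a small effect. Whether a small effect is worth its cost is still a judgment call that the statistic cannot make.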

That is why interpretation of impact evaluation results cannot stop at a p-value (the probability of seeing a difference at least as large as the one observed if the program truly had no effect, which evaluators often use to judge statistical significance). Decision-makers need context. They need to understand the size of the effect, the strength of the design, the quality of the comparison group, and the relevance of the outcome being measured.

Why Clear Evaluation Matters for Decision Makers

For district leaders, nonprofit executives, and edtech executives, the goal of independent program evaluation is to make better decisions. They need answers to practical questions:

Is the program improving student outcomes?

How large is the impact?

Which students are benefiting most?

Is the effect strong enough to justify continued investment?

Those questions require rigorous analysis, but they also require clear communication. Statistical concepts should lead to practical insight, not confusion. The best evaluations do both. They apply sound methods and explain results in plain language that supports real-world decisions.

That is especially important in K-12 environments, where leaders are making high-stakes decisions under budget constraints and time pressure.

From Statistical Results to Real Decisions

Understanding statistical significance is one important step in interpreting impact, but it is only one part of the bigger picture.

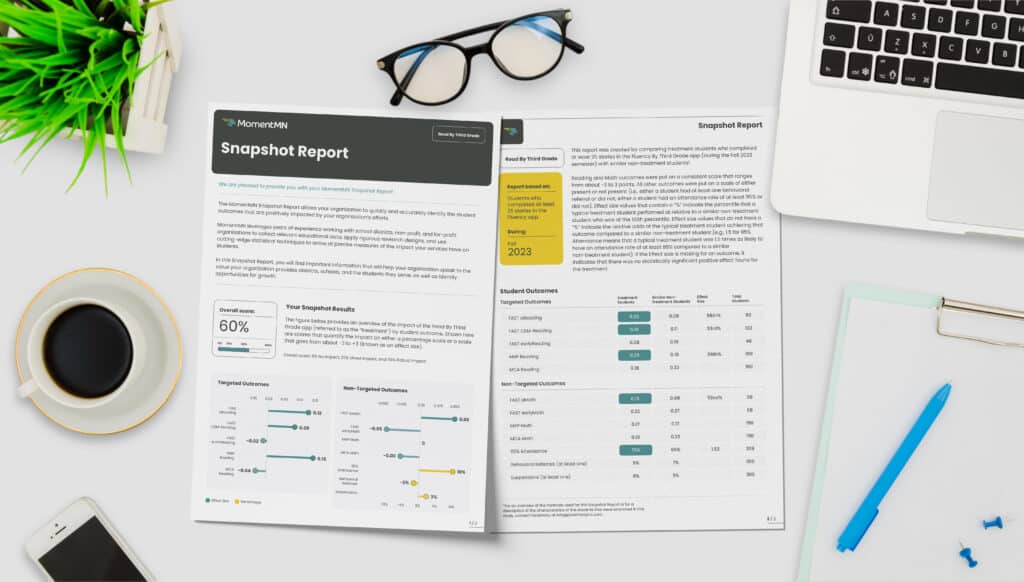

MomentMN Snapshot Reports from Parsimony combine rigorous educational impact evaluation with clear, practical interpretation designed for district leaders, edtech companies, and nonprofit partners. By leveraging existing student data and a rapid-cycle approach, Snapshot Reports provide credible, independent evidence of effectiveness without creating unnecessary burden for staff.

If you need more than a number and want decision-ready insight into whether a program is truly improving outcomes for students, MomentMN Snapshot Reports offer a practical path forward. They help organizations move beyond guesswork toward evidence-based decisions grounded in clarity, rigor, and relevance.

Request your sample report today to see the difference.