You walk into the renewal meeting with a beautiful, color-coded dashboard showing “90% student engagement” and “1,000+ hours logged.” The charts are clean. The trend lines slope upward. The slides feel polished, professional, and ready for a decision.

You expect a handshake; instead, you get a cold stare from the district CFO. To them, your internal data isn’t “evidence.” It’s marketing.

That moment surprises a lot of EdTech teams, especially those who have invested heavily in analytics, reporting infrastructure, and customer success. The dashboard is accurate. The numbers are real. The usage is strong. By any internal standard, the story looks positive.

But budget season changes how information is interpreted. When budgets get tight, districts stop looking at how much a tool is used and start asking whether it actually works. And if the only person vouching for the tool is the one sending the invoice, you’ve already lost the room.

That dynamic sits at the center of why internal dashboards, no matter how sophisticated, often stop carrying weight precisely when decisions matter most.

This article isn’t anti-data. And it isn’t an argument that internal analytics are useless. It’s about trust. Specifically, it’s about the difference between internal reporting and decision-grade evidence when public dollars, political scrutiny, and career risk enter the conversation.

What Budget Season Does to Data Trust

Outside of budget season, internal dashboards do exactly what they’re designed to do. They help districts and vendors understand implementation patterns. They surface adoption trends. They flag where training may be needed. They show whether a product is being used at scale or sitting idle.

In those contexts, usage metrics are genuinely helpful. They support operational improvement and day-to-day decision-making.

Budget season introduces an entirely different audience and a very different set of incentives.

Suddenly, the room includes people who are not emotionally invested in the product’s success: the CFO, the finance office, procurement leaders, board members, community stakeholders, sometimes even auditors. These stakeholders aren’t asking whether the dashboard looks reasonable. They’re asking whether the decision to keep funding this tool can be defended publicly.

A district leader might personally believe in a program. They may have championed its adoption. They may have invested time, political capital, and staff energy into making it work. But budget season forces that leader to step out of advocacy mode and into defense mode.

This isn’t about being hostile or adversarial. It’s about fiduciary responsibility and political survival.

Once a renewal decision becomes public, the question isn’t “Did we like this tool?” It’s “Can we justify this expense when something else gets cut?” That shift fundamentally raises the evidence threshold.

Dashboards that worked perfectly well for internal conversations now have to do something else entirely. They have to justify spending.

And that’s where many dashboards fall short.

Usage is not the same thing as outcomes. Engagement is not the same thing as impact. A dashboard can prove engagement. It cannot automatically prove effectiveness.

That cold stare from the CFO isn’t personal. It’s the moment someone realizes, “If I defend this contract and I’m wrong, I own the fallout.”

The Core Insight: The Credibility Gap

This is where many EdTech companies run headlong into what can be called the Academic Trap.

In this trap, EdTech companies feel forced to choose between self-reported data (which is fast but biased) and university-led studies (which are rigorous but can take two years).

On one side of that trap is speed. Internal dashboards, case studies, testimonials, and vendor-created reports can be produced quickly and aligned neatly with renewal cycles. They are responsive to sales and marketing needs. They show momentum. They tell a story.

On the other side is rigor. University partnerships, randomized controlled trials, and multi-year longitudinal studies carry real academic credibility. They can support strong causal claims. They are respected by researchers and funders alike.

Unfortunately, they arrive far too late for most real-world decisions.

This trap creates tension across organizations. Sales teams want something concrete they can put in front of districts during renewal conversations. Product teams want feedback that helps them understand where impact is strongest or weakest. Marketing teams want proof that goes beyond “trust us.” District leaders want evidence they can stand behind when questioned.

None of these needs are unreasonable. The problem is that traditional evaluation options force a trade-off that doesn’t align with procurement reality.

District leaders and school boards are under immense pressure to justify every dollar. Board meetings are public. Budgets are political documents. Renewals become talking points, sometimes even campaign fodder. Leaders are expected to defend decisions to people who may be skeptical by default, especially when money is tight.

They need political armor, not just data.

Political armor is evidence that reduces risk for decision-makers, can hold up under cross-examination, and feels independent enough to be trusted by someone who isn’t rooting for the renewal.

That’s why the voice delivering the evidence matters as much as the numbers themselves.

District leaders need an independent, third-party voice that can look a board member in the eye and say, “We analyzed their student growth data independently, and the impact is real.”

The moment evidence comes from outside the financial relationship, it becomes usable in a way internal data often isn’t. It can be cited. It can be referenced. It can be defended without caveats.

Without that external validation, your product is just a line item waiting to be cut. That doesn’t mean the product is bad. It means the evidence surrounding it isn’t strong enough to survive scrutiny.

In budget season, neutrality beats enthusiasm every time.

Why Internal Data Starts to Feel Like Marketing

Internal dashboards are typically built for the right reasons. They track implementation, monitor engagement, and even help customer success teams intervene when usage drops. They provide operational insights that keep programs running smoothly.

Budget season, however, asks a different set of questions, each of which requires a well-supported answer:

- What outcomes moved?

- Compared to what would have happened otherwise?

- Did the tool create measurable improvement, or did it simply generate activity?

Those questions are not easily answered by dashboards designed around logins, minutes, and completion rates. This is where the conflict-of-interest wall becomes visible, even if no one says it out loud.

The issue is not that vendors are dishonest. Most vendors genuinely believe in their products and want to demonstrate value. The issue is that incentives are visible.

A CFO looks at a dashboard and instantly understands the incentive structure. The organization presenting the data benefits financially if the interpretation is positive. That reality doesn’t require malice to undermine trust. It simply requires awareness.

In that renewal meeting, your internal numbers don’t fail because they’re wrong. They fail because they’re vulnerable to the simplest rebuttal in procurement history:

“Of course your dashboard says it works. You’re selling it.”

That sentence doesn’t accuse anyone of lying. It doesn’t dispute the numbers. It simply reframes the evidence as tied to the seller rather than independent, and in a budget debate, that reframing can be decisive.

Once that frame takes hold, it’s extremely difficult to recover credibility with more slides, more charts, or more usage metrics. That’s why you need reports that tell the whole story with the right evidence, not just the figures that appear to support your proposal.

The Parsimony Twist: Independence at the Speed of Business

When districts hear the phrase “independent evaluation,” many picture something very specific: a slow, academic behemoth. Most independent evaluations fit that picture.

They come with long timelines, complex data requirements, and deliverables that don’t fit procurement windows. They often result in reports written for journals or funders rather than decision-makers. They may be methodologically impressive, but operationally misaligned with how districts actually make decisions.

This creates a fundamental mismatch.

District renewal timelines don’t wait for multi-year evidence cycles. Budget calendars are fixed. Board agendas are set months in advance. Decisions get made whether the perfect study is ready or not.

Parsimony flips the script. Instead of asking districts or vendors to choose between speed and credibility, Parsimony designs evaluations specifically for decision windows. Independence without the academic drag. Rigor without the multi-year lag. Decision-grade evidence that arrives while the decision is still open.

We don’t ask you to wait for a multi-year longitudinal study while your contract sits in limbo.

That distinction matters more than it might seem. A contract in limbo is a contract at risk. Momentum dies when decision windows close. Districts make decisions on schedules, not on research idealism.

The goal is not to replace traditional research, but to recognize that most budget decisions can’t wait for it, and those decisions still need evidence that can guide them toward real improvement.

MomentMN Snapshot Reports as the “Third-Party Shield”

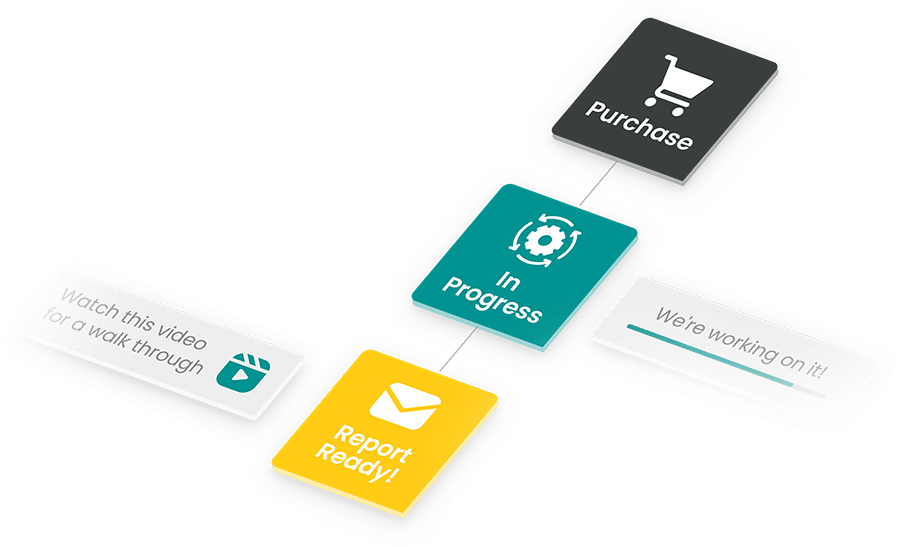

This is where MomentMN Snapshot Reports come in. When Snapshot Report results are positive, they serve as powerful third-party validation that districts can use to justify renewal decisions. In practice, the report becomes a “Third-Party Shield”: independent evidence that helps protect a district from dropping a product that is demonstrably benefiting its students.

That shield is delivered in just 14 days.

That timeline isn’t arbitrary. Budget season doesn’t offer much runway. Renewal windows can be 30 days. Sometimes less. District leaders need something credible before the decision goes to the board, not after.

By using the district’s own existing data, MomentMN Snapshot Reports remove a major barrier to evaluation. There’s no new data collection, no extra surveys, and no added burden on already stretched teams. The analysis is grounded in the measures districts already trust because they already use them.

This approach sends an important signal. District leaders trust their own data more than vendor-selected metrics. When an evaluation starts with district-owned data, it feels less like persuasion and more like analysis.

From there, MomentMN Snapshot Reports provide a rigorous, objective analysis that carries the weight of a PhD-led evaluation. The credibility comes from independence combined with methodological seriousness. The result is something leaders can cite without flinching, even in contentious settings.

Just as importantly, it fits within your 30-day renewal window. You don’t have to wait months, or even a year, to prove that a long-overdue change needs to happen.

Timing is a form of rigor. Evidence delivered after a decision is not useful. Decision-grade evidence is built to arrive when it can influence outcomes.

And the outcome here isn’t just a number. It’s a number that carries enough trust to turn a “maybe” into a signed contract.

That changes how conversations unfold, shifts the tone of renewal meetings, and gives district leaders room to say “yes” without feeling exposed. It allows CFOs to defend decisions based on independent analysis rather than vendor optimism.

From Dashboards to Defensible Decisions

When budgets tighten, dashboards lose persuasive power. Not because they’re useless, but because they’re conflicted. What decision-makers are looking for is proof that their decisions will have a positive, lasting impact.

Independent, third-party validation is what turns internal signals into board-ready proof. It’s what allows districts to move from “We think this works” to “We can defend this decision.” And if that validation can arrive within a 30-day renewal window, more budgets can begin to account for what actually needs to change.

If you want to see what that kind of evidence looks like in practice, and how it changes renewal conversations, visit our website to get a sample Snapshot Report.