A nonprofit leader presents results from a recent program. An EdTech team shares early findings from a district pilot. A school system reviews whether to renew a contract.

In each case, the update sounds promising: “We saw a statistically significant impact on student outcomes.”

Then someone asks the question that determines what happens next: “Is that actually meaningful?”

This is where many conversations stall. Leaders are often given proof that something happened, but not clarity on how much it mattered. And without that, it becomes difficult to justify funding, support a renewal, or make a confident decision.

This is where effect sizes become essential. In education impact evaluations, effect sizes translate results into something decision-makers can actually use. They turn data into direction.

The “So What?” Problem in Program Evaluation

Most evaluation conversations begin with statistical significance. It answers a basic question: Is the observed difference unlikely to be due to chance alone?

That matters, but it is not enough. Statistical significance tells you whether an effect is likely real. It does not tell you whether the effect was large enough to matter.

Effect size answers that second question. A simple way to think about it:

- Statistical significance is the on/off switch

- Effect size is the volume knob

For nonprofit leaders, this distinction affects funding decisions. A statistically significant result may still represent a very small improvement that is difficult to justify to a funder.

For EdTech leaders, it shapes sales and renewals. Districts are not just asking whether a product works. They want to know how much it improves student outcomes compared to alternatives.

Strong program evaluations focus on both. But when decisions are on the line, effect size is what answers the “so what?” question.
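To make the switch-versus-knob distinction concrete, here is a minimal Python sketch. It assumes two equal-sized groups and a simple normal (z) approximation; the function name and both scenarios are hypothetical, chosen only for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p_value(d: float, n_per_group: int) -> float:
    """Approximate two-sided p-value for a standardized mean difference d
    between two equal-sized groups (normal approximation, an assumption)."""
    # In standard-deviation units, the standard error of the difference
    # between two group means is sqrt(2 / n), so z = d * sqrt(n / 2).
    z = d * sqrt(n_per_group / 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical scenarios: (label, effect size d, students per group)
scenarios = [
    ("tiny effect, very large study", 0.02, 50_000),
    ("moderate effect, small study", 0.30, 40),
]

for label, d, n in scenarios:
    p = two_sided_p_value(d, n)
    print(f"{label}: d = {d:.2f}, n = {n} per group, p = {p:.4f}")
```

Under these assumptions, the tiny effect in the very large study comes out statistically significant (p below 0.01), while the larger effect in the small study does not (p above 0.05). The p-value alone says nothing about which result matters more.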

What Is an Effect Size (In Plain English)?

Effect size measures the magnitude of impact. It answers questions like:

- How much better did program students perform relative to their non-program peers?

- How much more likely were they to succeed relative to their non-program peers?

Instead of simply showing that a program had an effect, it shows how large that effect was.

This is what makes an effect size so useful for data-driven decision-making in education. It provides a common language for comparing results across programs, products, and contexts.

Importantly, leaders do not need to calculate effect sizes themselves. What they need is a clear interpretation. The value comes from translating technical outputs into practical insight.

That is the difference between reporting results and making them actionable.

Cohen’s d: The “Standard Ruler” for Student Achievement

One of the most common ways to express effect size in education is Cohen’s d. It is used for continuous outcomes such as:

- Course grades

- Standardized test scores

- GPA

Cohen’s d converts results into a common unit called a standard deviation. This allows comparisons across different tests, districts, and studies, even when the original scales are not the same.
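For readers who want to see the mechanics, the sketch below shows one common way to compute Cohen’s d: the difference between the two group means divided by a pooled standard deviation. The function name and the scores are made up for illustration, and a real evaluation would typically also adjust for baseline differences between groups.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(treatment: list[float], comparison: list[float]) -> float:
    """Difference in group means, expressed in pooled standard deviations."""
    n_t, n_c = len(treatment), len(comparison)
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_variance = (
        (n_t - 1) * variance(treatment) + (n_c - 1) * variance(comparison)
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(comparison)) / sqrt(pooled_variance)


# Made-up assessment scores, for illustration only.
program_scores = [72, 75, 78, 80, 85]
peer_scores = [70, 73, 76, 78, 83]

d = cohens_d(program_scores, peer_scores)
print(f"Cohen's d = {d:.2f}")

# The same students on a test scored out of 1,000 instead of 100:
d_rescaled = cohens_d([s * 10 for s in program_scores],
                      [s * 10 for s in peer_scores])
print(f"Cohen's d on the rescaled test = {d_rescaled:.2f}")
```

Both calls return the same value (about 0.40 for these made-up scores), which is the point of a standardized unit: the result does not depend on the scale of the original test.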

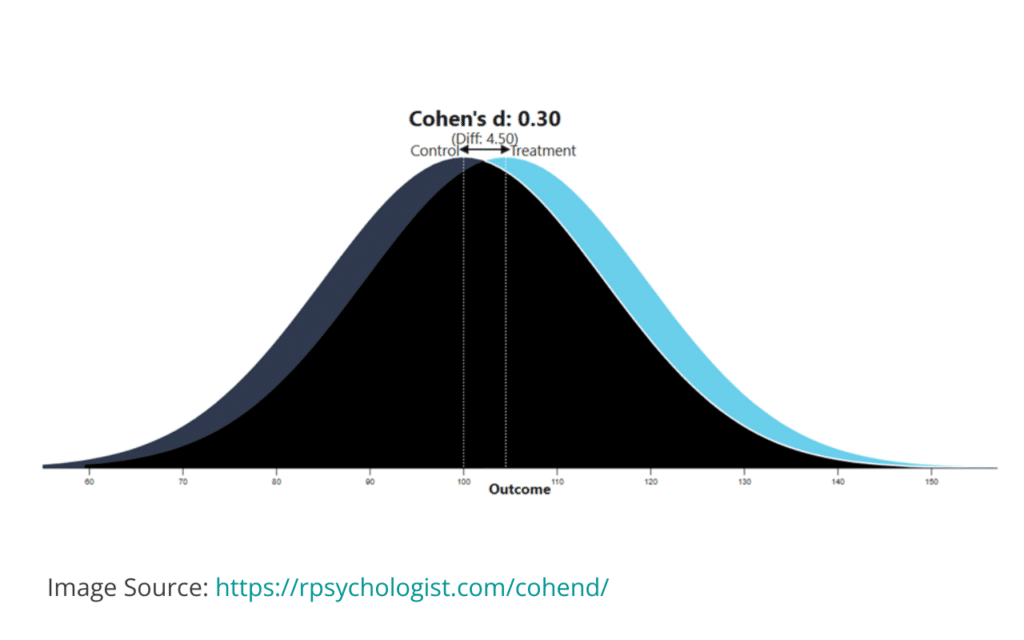

For example, a report might state: Students who went through a literacy program outperformed their non-program peers on a district assessment by a Cohen’s d of 0.30.

In a real evaluation context, this is the kind of result that moves beyond internal reporting. It becomes credible evidence of effectiveness that can support a renewal decision, strengthen a grant application, or differentiate a product in a competitive market.

On its own, that number may not mean much to a non-technical audience. But translated into plain language, it represents a measurable and meaningful improvement in student outcomes.

One way to visualize this is to imagine two groups of students:

- One group used the program (the “Treatment” group)

- One group did not (the “Comparison” or “Control” group)

Each group forms a bell curve based on performance. Cohen’s d measures the distance between those curves. The larger the distance, the greater the impact.
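One hedged way to translate that distance into plain language is to ask where the average program student would rank among comparison students. The sketch below assumes both groups follow bell (normal) curves with the same spread, which real data only approximate; the function name is hypothetical.

```python
from statistics import NormalDist


def percentile_in_comparison_group(d: float) -> float:
    """Percentile of the comparison group at which the average program
    student would score, assuming normal curves with equal spread."""
    return 100 * NormalDist().cdf(d)


for d in (0.20, 0.30, 0.40):
    pct = percentile_in_comparison_group(d)
    print(f"d = {d:.2f}: average program student is at about "
          f"percentile {pct:.0f} of the comparison group")
```

With no effect (d = 0), the average program student sits at the 50th percentile of the comparison group. Under these assumptions, a d of 0.30 moves that student to roughly the 62nd percentile.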

In education, context matters when interpreting these values:

- Around 0.20 is often considered small but meaningful

- Around 0.40 or higher is typically seen as a strong effect

This is why Cohen’s d is often central to explaining effect sizes in education in a way that supports decision-making. It provides a consistent benchmark for understanding impact on different student outcome metrics.

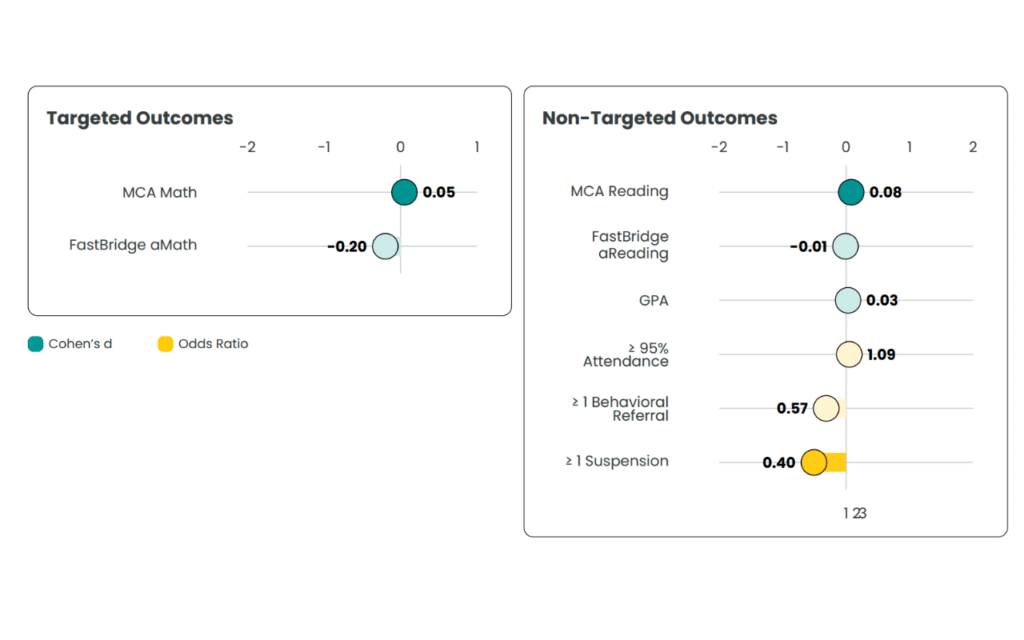

Odds Ratios: Turning Outcomes Into Relative Impact

Not all outcomes are continuous. Many important questions in education are binary:

- Did a student pass or not?

- Did they graduate or not?

- Were they chronically absent or not?

To measure impact on these types of outcomes, odds ratios are often used. An odds ratio compares the odds of an outcome in the program group with the odds of that outcome in the comparison group.

- An odds ratio of 1.0 means there is no difference between groups.

- Anything above 1.0 means the outcome is more likely in the program group.

- Anything below 1.0 means the outcome is less likely in the program group.

For example:

- Imagine a literacy program has an odds ratio of 1.55. This indicates that students in the program have 55% higher odds of becoming proficient than students who weren’t in the program. It shows the program is moving the needle in the right direction.

You may be wondering why we don’t just report the difference in the percentage of proficient students between the groups. For example, 75% proficient in the treatment group versus 66% proficient in the comparison group is a 9-percentage-point boost in proficiency. For a common outcome like this, that can make sense.

But what if the outcome you’re trying to impact is a rare event, and you find that 3% of the treatment students were suspended compared to 7% of the comparison students? Does reporting a 4-percentage-point drop appropriately reflect the program’s impact on suspensions? Is that a meaningful decrease? For which outcome did your program have the bigger impact: proficiency or suspension?

The odds ratio of 0.41 tells you that the treatment students had 59% lower odds of being suspended (calculated as 1.0 – 0.41 = 0.59), which suggests the program had a bigger impact on suspension than on proficiency. Odds ratios also have the benefit of working well with rare events.
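The arithmetic behind these two examples can be sketched in a few lines of Python. This is a minimal, unadjusted calculation from the group percentages quoted above; the function names are hypothetical, and a full evaluation would usually estimate odds ratios from a statistical model rather than from raw percentages.

```python
def odds(rate: float) -> float:
    """Convert a proportion (e.g. 0.75) into odds (e.g. 3 to 1)."""
    return rate / (1 - rate)


def odds_ratio(treatment_rate: float, comparison_rate: float) -> float:
    """Odds of the outcome in the treatment group relative to the comparison group."""
    return odds(treatment_rate) / odds(comparison_rate)


# Proficiency: 75% of treatment students vs. 66% of comparison students.
proficiency_or = odds_ratio(0.75, 0.66)

# Suspension: 3% of treatment students vs. 7% of comparison students.
suspension_or = odds_ratio(0.03, 0.07)

print(f"Proficiency odds ratio: {proficiency_or:.2f}")  # 55% higher odds
print(f"Suspension odds ratio:  {suspension_or:.2f}")   # 59% lower odds

# To compare strength across a "higher is better" and a "lower is better"
# outcome, one option is to flip the ratio that falls below 1.0.
print(f"Suspension odds ratio, flipped: {1 / suspension_or:.2f}")
```

The proficiency percentages work out to an odds ratio of about 1.55 and the suspension percentages to about 0.41. Flipping the suspension ratio gives about 2.43, meaning comparison students had roughly 2.4 times the odds of being suspended, which is a larger relative difference than the 1.55 for proficiency.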

For nonprofit leaders, this can strengthen grant applications by clearly demonstrating impact.

For EdTech companies, it becomes compelling evidence of effectiveness that supports both marketing and sales conversations.

Rather than relying on general claims, leaders can point to clear, quantified differences in outcomes.

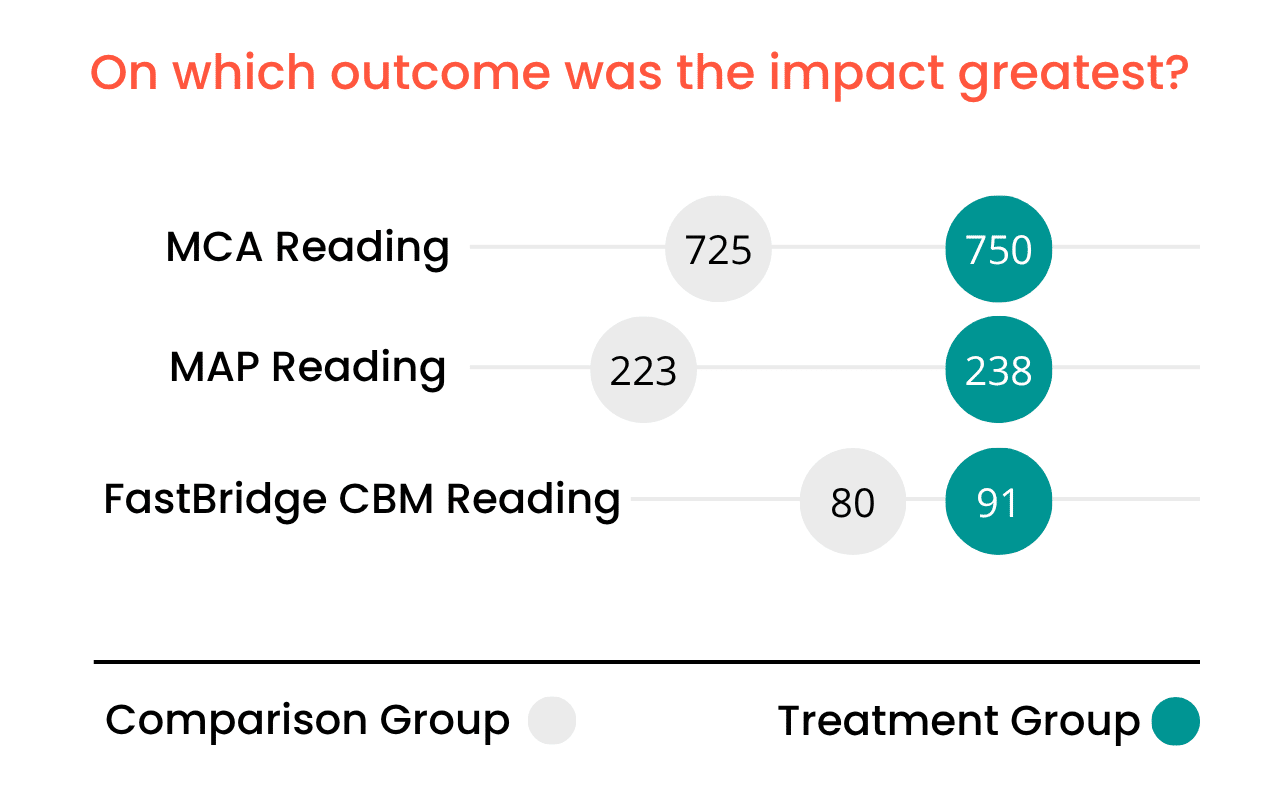

The Power of Comparison: Where Effect Sizes Become Strategic

Effect sizes become even more valuable when used for comparison.

Within a single program, they can help identify what is working best. For example, a solution may show a stronger effect on fluency (a Cohen’s d of 0.45) than on comprehension (0.15). That insight can guide product development or program refinement.

Across programs or studies, effect sizes provide a common reference point.

They also help organizations move beyond what can sometimes feel like an “academic” standard. A rapid-cycle evaluation conducted in a real-world setting can produce effect sizes that align with findings from larger, slower studies.

This reinforces a practical approach to educational impact evaluations. Rigor matters, but so does timing. Leaders need credible insight when decisions are being made, not years later.

The Power of an Effect Size

For EdTech leaders, effect sizes change the nature of the conversation. Instead of focusing on cost alone, the discussion shifts to impact:

- How much student growth is tied to this product?

- How much of a decline could we expect if it were removed?

This supports renewals, strengthens positioning, and contributes to program ROI in education.

For nonprofit leaders, effect sizes turn stories into evidence. They allow organizations to move from describing what they do to demonstrating measurable outcomes. This builds credibility with funders and supports long-term sustainability.

For district leaders, effect sizes contribute to more defensible decisions. They support evidence-based decision-making in schools by connecting investments directly to student outcomes.

Across all three audiences, the underlying value is the same. Effect sizes provide a clear, consistent way to understand impact.

Bringing It Back to the Big Idea

An effect size is often presented as a technical concept. In practice, it is something much simpler.

It is the common language of impact. It helps leaders move from looking at data on different outcomes to navigating decisions with clarity. It allows them to move from knowing that something worked to understanding how much it worked. And that difference is what supports better decisions.

Leaders do not need to become experts in statistical formulas. They need access to clear interpretation and reliable evaluation. A rapid-cycle, independent approach provides that insight within real-world timelines.