Outcomes-based contracting has obvious appeal in K–12. In many ways, it feels like the accountability model district leaders have been waiting for. Instead of paying for promises, implementation milestones, or a polished pitch deck, the district pays for outcomes.

That instinct is sound. In a climate shaped by budget pressure, board scrutiny, and rising expectations for evidence-based decision making, outcomes-based contracts signal stewardship. They tell stakeholders the district is serious about tying dollars to student impact.

But every strong accountability model still depends on one uncomfortable question: who decides whether the outcome was actually achieved?

If the vendor is selecting the metrics, analyzing the data, and presenting the findings, the district may have a contract with outcomes language, but not true protection.

You would not let one team bring its own referees to a championship game. District purchasing should not work that way either. If real dollars are tied to real outcomes, the district needs a credible referee watching the clock.

Why Vendor Self-Reporting Is Not Enough

Most vendors are not trying to mislead districts. That is not the point. The point is that incentives matter.

When renewal, expansion, and reputation are on the line, vendors have every reason to highlight the numbers that make the story look strongest. That often means leading with usage metrics such as logins, time on platform, implementation completion, or teacher participation. Those measures may reflect adoption. They do not, by themselves, prove student impact.

A product can be used heavily and still fail to move outcomes that matter to the district.

That distinction matters more in outcomes-based contracts than in almost any other arrangement. Once payment, renewal, or expansion is tied to “what worked,” the district needs evidence that can survive hard questions from finance leaders, superintendents, school boards, and the public. Otherwise, the district is left defending a high-stakes decision without credible evidence.

Federal education guidance has pushed the field toward stronger evidence standards for good reason. Under the Every Student Succeeds Act (ESSA), stronger claims require stronger study design, not just stronger marketing language.

That is where independent rigor stops being a nice extra and starts becoming the real safeguard.

What Rigorous Evaluation Actually Looks Like

If a district is going to tie dollars to outcomes, the evidence behind those outcomes has to be credible, district-specific, and clear enough to defend in a board room.

1. An Independent Third Party Has to Own the Analysis

First, the district needs an independent third-party evaluator. Independent evaluation reduces the obvious conflict of interest built into vendor self-reporting. More importantly, it gives district leaders something more useful than reassurance: defensible credibility when budgets, renewals, and public scrutiny converge.

When findings come from an outside evaluator with no stake in the renewal decision, they carry more weight with boards, taxpayers, and procurement stakeholders.

2. The Evidence Has to Come from Your Students, Not Someone Else’s

Second, the evaluation has to use the district’s own student data. National studies, white papers, and generalized case studies can be helpful background, but they do not answer the question district leaders actually face: did this work here, with our students, in our schools, under our implementation conditions?

That is the kind of evidence that matters when a district is deciding whether to renew, expand, defend, or walk away.

3. The Design Separates Real Impact from Noise

Third, the design has to move beyond simple before-and-after comparisons. In education, outcomes shift for all kinds of reasons unrelated to the program itself. That is why stronger evaluations often rely on quasi-experimental designs, which compare participating students to similar non-participating students in order to estimate the lift associated with the intervention.

In real school systems, random assignment is often unrealistic. Quasi-experimental design gives districts a practical way to examine cause-and-effect questions under the conditions they actually operate in.

This is where baseline controls and matching matter. The real question is whether students outperformed what we would reasonably expect given where they started. That is also what makes this kind of design so useful in rapid-cycle evaluation. District leaders rarely have the luxury of waiting two years for perfect evidence. They need credible answers in time to make real decisions.

The Institute of Education Sciences regularly points to matched comparison approaches as a way to compare participating students with similar students who did not receive the program. That logic is essential in K–12 because districts are rarely making decisions in laboratory conditions. They are making them in real schools with shifting schedules, uneven implementation, student mobility, and multiple initiatives happening at once.
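To make the matched comparison idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not any evaluator's actual method: the student records are invented, the function names `match_on_baseline` and `estimated_lift` are hypothetical, and matching uses a single baseline score with a simple nearest-neighbor rule. A real evaluation would typically match on more characteristics and report uncertainty around the estimate.

```python
from statistics import mean

# Hypothetical records: a baseline score and an end-of-year outcome per student.
participants = [
    {"baseline": 40, "outcome": 52},
    {"baseline": 60, "outcome": 70},
    {"baseline": 80, "outcome": 86},
]
non_participants = [
    {"baseline": 41, "outcome": 48},
    {"baseline": 59, "outcome": 66},
    {"baseline": 78, "outcome": 84},
    {"baseline": 20, "outcome": 30},  # no similar participant, so never matched
]

def match_on_baseline(treated, pool, caliper=5.0):
    """Pair each participant with the closest unused non-participant.

    A pair is kept only if the baseline scores differ by no more than
    `caliper` points (an assumed threshold chosen for this example).
    """
    available = list(pool)
    pairs = []
    for student in sorted(treated, key=lambda s: s["baseline"]):
        if not available:
            break
        best = min(available, key=lambda c: abs(c["baseline"] - student["baseline"]))
        if abs(best["baseline"] - student["baseline"]) <= caliper:
            pairs.append((student, best))
            available.remove(best)
    return pairs

def estimated_lift(pairs):
    """Average outcome difference between participants and their matches."""
    return mean(p["outcome"] - c["outcome"] for p, c in pairs)

pairs = match_on_baseline(participants, non_participants)
matched_lift = estimated_lift(pairs)

# Unmatched comparison for contrast: all participants vs. all non-participants.
naive_gap = mean(s["outcome"] for s in participants) - mean(
    s["outcome"] for s in non_participants
)

print(f"Matched pairs: {len(pairs)}")
print(f"Lift among matched students: {matched_lift:.2f} points")
print(f"Naive gap without matching:  {naive_gap:.2f} points")
```

In this invented data, the unmatched gap is much larger than the matched estimate because one non-participant started far behind everyone else. Matching on where students started keeps that difference from being mistaken for program impact.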

4. The Outcomes Measured Should Match the District’s Priorities

Fourth, the outcomes being measured need to align with district priorities, not just vendor convenience. If the district is accountable for attendance, behavior, assessment performance, course completion, or other strategic indicators, then those are the metrics that should anchor the evaluation.

Vanity metrics may be easier to collect and easier to celebrate, but they do not help a district defend a decision when the real question is whether students benefited in ways the system actually tracks.

5. The Analysis Reflects the Real World

Fifth, the analysis should reflect the real world, not a cleaned-up success story. Honest evaluation often depends on intent-to-treat thinking rather than a sample trimmed to only the strongest users. In plain language, that means looking at everyone who started the program, not just the students who used it perfectly or stayed engaged long enough to make the results look best.
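The difference between the two approaches is easy to see with a small, hypothetical example. The sketch below uses invented student records and an assumed cutoff of 600 minutes for "heavy use"; none of these numbers come from a real program. It compares the gain for everyone who started the program with the gain for heavy users only, each measured against the same comparison group.

```python
from statistics import mean

# Hypothetical records: whether the student started the program,
# minutes of use, and score gain over the year.
students = [
    {"assigned": True, "minutes_used": 900, "gain": 8.0},
    {"assigned": True, "minutes_used": 850, "gain": 7.0},
    {"assigned": True, "minutes_used": 120, "gain": 1.0},
    {"assigned": True, "minutes_used": 0, "gain": 0.0},
    {"assigned": False, "minutes_used": 0, "gain": 2.0},
    {"assigned": False, "minutes_used": 0, "gain": 3.0},
    {"assigned": False, "minutes_used": 0, "gain": 1.0},
    {"assigned": False, "minutes_used": 0, "gain": 2.0},
]

HEAVY_USE_MINUTES = 600  # assumed cutoff, for illustration only

def mean_gain(rows):
    """Average score gain across a list of student records."""
    return mean(r["gain"] for r in rows)

comparison = [r for r in students if not r["assigned"]]
everyone_who_started = [r for r in students if r["assigned"]]
heavy_users_only = [
    r for r in everyone_who_started if r["minutes_used"] >= HEAVY_USE_MINUTES
]

# Intent-to-treat: every student who started counts, however much they used it.
itt_estimate = mean_gain(everyone_who_started) - mean_gain(comparison)

# Heavy users only: the trimmed sample that tends to flatter the result.
heavy_user_estimate = mean_gain(heavy_users_only) - mean_gain(comparison)

print(f"Intent-to-treat estimate: {itt_estimate:.1f} points")
print(f"Heavy-users-only estimate: {heavy_user_estimate:.1f} points")
```

With these made-up numbers, the heavy-users-only figure is well above the intent-to-treat figure. The district paid for all four students who started, so the intent-to-treat number is the one that describes what the purchase actually delivered.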

District leaders do not buy ideal implementation. They buy real implementation. That includes uneven usage, incomplete participation, and all the friction that comes with implementation fidelity in the real world.

The evidence should match that reality. No leader wants to defend a costly renewal decision with evidence that collapses under scrutiny.

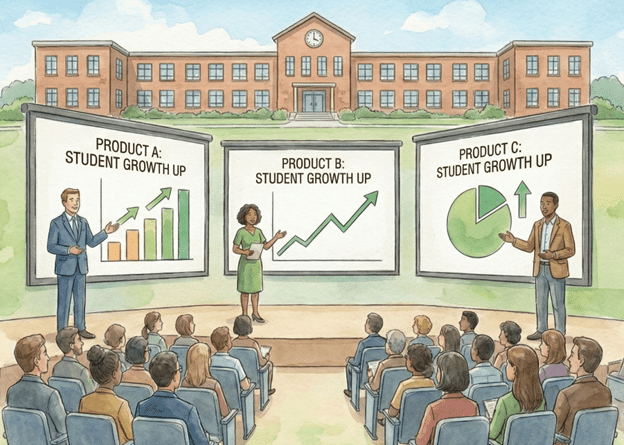

Why This Is Better for Districts and Vendors

Independent rigor protects districts, but it also helps good vendors.

For district leaders, it turns a contract review from a debate over competing narratives into a decision anchored in evidence. That is how a budget starts to look less like a cost center and more like an investment portfolio. It helps move the conversation from “we think this is helping” to “here is what happened with our students, compared with similar students, on outcomes we actually care about.”

For vendors, independent evaluation creates something self-reported success rarely can: trust at the exact moment trust matters most. If a product truly is helping students, third-party evidence makes that claim more persuasive in renewals, procurement conversations, and future sales. It gives strong vendors a far more durable case than dashboards, testimonials, or usage reports ever could.

It is the difference between saying your product works and being able to show that it worked under real district conditions. Done well, this is not a gotcha exercise. It is a shared reality check that helps both sides make better decisions for students.

That is why independent rigor should not be framed as adversarial. It is not about trying to catch someone failing. It is about making sure the evidence is strong enough to support action.

Outcomes-based contracting can be a powerful tool, but only if the outcome is measured in a way both parties can stand behind.

Without independent rigor, districts are still guessing. They are just guessing in more formal language.

If the goal is real accountability, the evaluation cannot be self-graded. It needs a referee.

Want to see what independent, district-specific impact evidence actually looks like? Request a copy of a MomentMN Snapshot Report and experience how a product or service’s impact can be presented clearly, rigorously, and with minimal burden on your team.