If you are leading a district today, the problem is rarely a lack of data. In many cases, it is the opposite.

Dashboards tracking attendance, assessment scores, and intervention usage. Spreadsheets. Vendor reports. Internal updates. On paper, it looks like everything you need to make strong decisions is already there.

And yet, when you sit in front of your board, someone asks the most important question: which of these programs actually moved the needle on student outcomes?

This is where many district leaders stall. There is no shortage of information. What is often missing is confidence in what that information actually means. This is the gap many districts face in data-driven decision-making in education.

You’re being asked to make real decisions about curriculum, tools, and investments. Decisions that shape students, teachers, and the direction of your district. And too often, the data you have does not provide the clarity needed to fully stand behind those choices.

Your role is bigger than managing spreadsheets. You are stewarding opportunity. Every budget decision shapes which students get access to support, which programs continue, and where your district places its confidence.

Most districts are working with tools that tell them what already happened. Dashboards and spreadsheets are great at that. They show patterns. Trends. Activity over time. They’re the map after the trip. But what they don’t do is tell you why something worked or what’s likely to happen next.

That’s where statistical models come in. They act more like a GPS. They help you understand what’s actually driving outcomes and how to stay on the right path moving forward.

And that difference changes everything, especially when you’re trying to make smarter, more defensible decisions about your district’s budget.

Why Dashboards and Spreadsheets Aren’t Enough

To be clear, dashboards are useful. They help organize information. They make it easier to see patterns. They give teams a shared view of what’s happening across the district. But they have a limitation that’s easy to overlook: they’re always looking backward.

Let’s say your dashboard shows that students who used a certain literacy program scored higher on reading assessments.

That may seem encouraging. But there’s an important question sitting underneath that result:

Did the program actually cause that improvement? Or were those students already more likely to perform well?

Dashboards can surface correlation. They cannot establish causation. And when decisions are based on correlation alone, things can get risky. Programs that look effective on paper might not hold up when you take a closer look. This is where traditional approaches to being “data-driven” often fall short.

And this is also where districts can fall into a costly trap. Investments get reinforced because they appear to be working, not because they’ve been clearly shown to work. Dashboards show you activity. Models show you impact.

On top of that, student outcomes are influenced by a lot of overlapping factors. Prior achievement. Attendance. Background. Teacher experience. Engagement.

When those factors are not accounted for, the picture becomes noisy, and the conclusions become less reliable. That noise can make results feel right, even when they are not.

So how do you move from “this looks promising” to “we know this is working”? That’s where statistical models come in.

What Is a Statistical Model?

A statistical model is a structured way of estimating how much different factors contribute to an outcome.

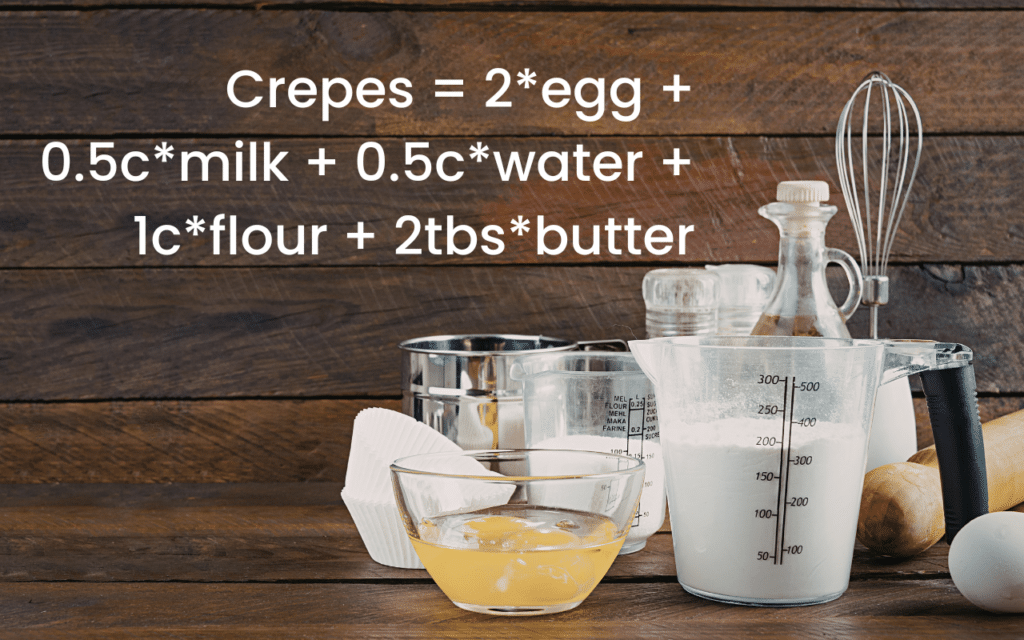

A helpful way to think about a statistical model is as a recipe. The outcome is the final dish. In a district setting, that might be something like third-grade reading growth. The ingredients are everything that could influence that result. Attendance. Prior scores. Time spent using a program. Teacher experience. Classroom environment.

A statistical model looks at all of those ingredients together and asks: How much does each one actually matter?

In simple terms, it works like this:

Outcome (Y) = Weight₁ × (Factor A) + Weight₂ × (Factor B) + Error

If the outcome is third-grade reading growth, the model helps estimate how much weight to give to factors like attendance, prior achievement, minutes using an EdTech product, or teacher experience.
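To make the formula concrete, here is a minimal Python sketch. The factor names, the weights, and the student record are all invented for illustration. In practice the weights would be estimated from your district's data rather than typed in by hand.

```python
# Hypothetical weights: points of reading growth per unit of each factor.
# These numbers are made up for illustration, not estimated from real data.
WEIGHTS = {
    "attendance_rate": 10.0,  # per 1.0 (that is, 100%) attendance
    "prior_score": 0.05,      # per point on last year's assessment
    "program_hours": 0.2,     # per hour of program use
}


def predicted_outcome(factors: dict[str, float]) -> float:
    """Weighted sum of the factors: each factor times its weight, added up."""
    return sum(WEIGHTS[name] * value for name, value in factors.items())


# One hypothetical student.
student = {"attendance_rate": 0.9, "prior_score": 200.0, "program_hours": 15.0}
observed_growth = 24.0  # hypothetical observed reading growth

predicted = predicted_outcome(student)
error = observed_growth - predicted  # the part the listed factors do not explain

print(f"predicted={predicted:.1f}, error={error:.1f}")
```

The error term is the gap between what the weighted factors predict and what actually happened. A model chooses the weights that keep those gaps as small as possible across all students.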

What makes this powerful is how it uses your district’s data to estimate those relationships. It brings a more practical approach to student data analysis, focused on decisions rather than reporting.

For example, instead of just observing that students who used a literacy tool performed better, the model compares them to similar students who didn’t use it. The word “similar” means that the students being compared share a comparable starting point, comparable characteristics, and a similar context.

Now the comparison is more meaningful. And the result might show, for example, that students who used the program gained 3 more points in reading compared to similar students who did not.
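The difference between a raw comparison and a "similar students" comparison can be sketched in a few lines of Python. This example uses stratification on a single factor (a prior-achievement band), which is a simplified stand-in for the adjustment a full statistical model would make across many factors. The records are invented, and the numbers were chosen to make the contrast easy to see.

```python
from collections import defaultdict
from statistics import mean

# (prior_band, used_program, reading_growth): invented records.
# Program users are concentrated in the "high" prior-achievement band.
students = [
    ("high", True, 13), ("high", True, 13), ("high", True, 13), ("high", False, 10),
    ("low", True, 8), ("low", False, 5), ("low", False, 5), ("low", False, 5),
]


def naive_difference(rows):
    """Average growth of all users minus average growth of all non-users."""
    users = [g for _, used, g in rows if used]
    others = [g for _, used, g in rows if not used]
    return mean(users) - mean(others)


def adjusted_difference(rows):
    """Compare users and non-users within each prior band, then average
    the band-level gaps weighted by band size.
    Assumes every band contains at least one user and one non-user."""
    bands = defaultdict(list)
    for band, used, growth in rows:
        bands[band].append((used, growth))
    total = 0.0
    for members in bands.values():
        users = [g for used, g in members if used]
        others = [g for used, g in members if not used]
        total += (mean(users) - mean(others)) * len(members)
    return total / len(rows)


naive = naive_difference(students)
adjusted = adjusted_difference(students)
print(f"naive gap={naive:.2f}, adjusted gap={adjusted:.2f}")
```

In this made-up data the raw gap overstates the program's effect because users started from a stronger position. Comparing within bands removes that head start from the estimate.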

That is a very different level of insight. You’re no longer guessing based on patterns. You’re starting to understand what’s actually driving the outcome. This is what more rigorous education impact evaluation methods are designed to do in practice.

Two Reasons Districts Need Models: Understanding and Prediction

1. Explaining the Relationship (The “Why”)

The first reason districts need models is clarity. A statistical model helps you answer a question that dashboards alone can’t:

When other measured factors are controlled for, is this intervention truly driving improvement, or does it only appear effective because the students using it were already better positioned to succeed?

Instead of relying on surface-level trends, you’re able to isolate the impact of a specific intervention. You can see whether a math program is truly driving gains, or if the results were influenced by other factors.

That kind of insight strengthens conversations with your team, your vendors, and your board because it gives you something firmer than a trend line. It gives you evidence. It also supports stronger decision-making across the district.

2. Predicting the Future (The “Early Warning System”)

Statistical models don’t just explain the past. They help you anticipate the future. This is where predictive analytics comes into play.

For example, models can help identify students who are at risk of falling behind before it happens. That gives your team a chance to step in earlier, when support is more effective and less intensive.
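As a rough illustration of an early warning flag, the sketch below fits a straight line to a past cohort's fall and spring scores, then uses it to project spring scores for current students. It is a deliberately simple one-factor example, not a description of any particular product's method. The scores, the benchmark, and the student labels are all hypothetical.

```python
from statistics import mean

# Invented scores from a past cohort: fall screening vs. spring assessment.
past_fall = [180, 190, 200, 210, 220]
past_spring = [188, 197, 206, 215, 224]


def fit_line(xs, ys):
    """Ordinary least squares with one predictor; returns (slope, intercept)."""
    mx, my = mean(xs), mean(ys)
    slope = (
        sum((x - mx) * (y - my) for x, y in zip(xs, ys))
        / sum((x - mx) ** 2 for x in xs)
    )
    return slope, my - slope * mx


slope, intercept = fit_line(past_fall, past_spring)

SPRING_BENCHMARK = 205  # hypothetical proficiency cut score
current_fall = {"Student A": 185, "Student B": 205, "Student C": 195}

# Flag students whose projected spring score falls below the benchmark.
flagged = [
    name
    for name, fall in current_fall.items()
    if slope * fall + intercept < SPRING_BENCHMARK
]
print(flagged)
```

A real early warning model would draw on more factors, such as attendance, and would report how uncertain each projection is. The underlying idea is the same: learn the pattern from past students, then apply it to current ones while there is still time to act.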

They can also identify bright spots. These are the classrooms, schools, or programs that are outperforming expectations. That gives district leaders a chance to study what is working and scale it deliberately, rather than trying to replicate it blindly elsewhere.
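One simple way to operationalize "outperforming expectations" is to compare each school's actual result with the result a model expected for its students. The sketch below assumes those expected values have already been produced by a model. The school names, the numbers, and the two-point margin are hypothetical.

```python
# Expected growth would come from a statistical model; actual growth is observed.
# All values here are invented for illustration.
schools = {
    "School A": {"expected": 10.0, "actual": 10.5},
    "School B": {"expected": 8.0, "actual": 12.0},
    "School C": {"expected": 12.0, "actual": 9.0},
    "School D": {"expected": 9.0, "actual": 11.5},
}

MARGIN = 2.0  # hypothetical threshold for "clearly above expectation"


def bright_spots(data, margin):
    """Return schools whose actual growth beats expected growth by at least
    `margin`, ordered from largest gap to smallest."""
    gaps = {name: s["actual"] - s["expected"] for name, s in data.items()}
    winners = [name for name, gap in gaps.items() if gap >= margin]
    return sorted(winners, key=lambda name: gaps[name], reverse=True)


spots = bright_spots(schools, MARGIN)
print(spots)
```

Note that School C has the highest expected growth but falls short of it, while School B starts from the lowest expectation and exceeds it by the widest margin. Ranking on raw results alone would hide that.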

So you’re not just reacting to problems. You’re getting ahead of them. And you’re building on success intentionally.

The MomentMN Snapshot: Models in Action

This is where the MomentMN Snapshot Report can put these models to practical use.

Instead of asking districts to collect new data or run complex analyses internally, it works with the data you already have. It combines that with information about which programs your students are using. In effect, it functions as a form of independent program evaluation for K–12 without adding burden to your internal team.

Then it applies statistical models to estimate impact. The goal is not to overwhelm district leaders with analysis. It is to translate rigorous analysis into clear answers.

You get a clear, focused report that answers a direct question: Is this program working, and by how much?

No digging through multiple dashboards. No trying to connect the dots across different reports. Instead, you receive a focused view of what is making a measurable difference.

It shifts the conversation from “We think this is helping” to “We have evidence that this is making a measurable impact.”

Building Your “Bulletproof” Portfolio

A strong district budget isn’t built on how much data you have. It’s built on how clearly you understand it.

When budgets tighten, every decision matters more. You’re asked to justify what stays and what goes. And those decisions don’t just affect numbers. They affect students.

Districts that rely on statistical models are in a stronger position when those moments arrive. They can point to what’s working, explain why, and make adjustments with confidence.

The most effective leaders do not simply have the most data. They have the most clarity.

If you are ready to move beyond reporting outcomes and start understanding what is driving them, the next step is simple: review a sample Snapshot Report. It is one of the clearest ways to see what these models can reveal about which programs are truly moving the needle for students.

Because when you understand what is actually working, everything else begins to align around it.