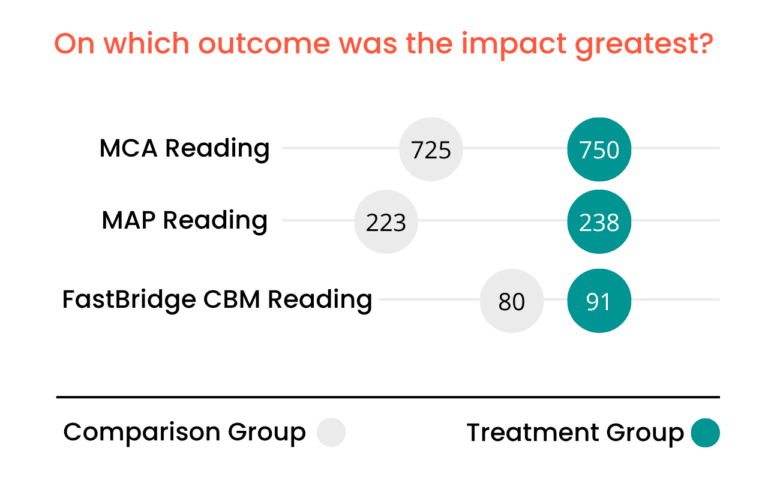

A school district is reviewing whether to renew an edtech contract. A nonprofit organization is preparing a major grant application. A product team at an educational technology company wants credible evidence that its product improves student outcomes.

In each of these situations, the same question eventually emerges: How do we know this actually works?

Organizations across the K–12 sector increasingly rely on educational program evaluation to answer that question. The U.S. Department of Education’s Institute of Education Sciences notes that program evaluation is essential for assessing how programs are implemented and whether they improve student outcomes.

However, there are situations where internal analysis alone is not enough. When credibility or technical rigor becomes critical, bringing in an external evaluator can provide a more trustworthy picture of program impact.

Understanding when to involve outside expertise, and how to prepare for that collaboration, can turn evaluation from a reporting exercise into a meaningful decision-making tool.

What an External Evaluator Brings

An external evaluator is an independent third party who assesses whether a program, product, or intervention improves student outcomes. Federal education guidance defines independent evaluation as analysis conducted separately from the organizations implementing the program.

This independence provides two main advantages. External evaluators bring specialized expertise in education impact evaluation methods, and their findings carry more weight with external audiences, who often trust third-party results more than internal reports.

Most importantly, effective evaluators focus on actionable insight. The goal is not simply to produce a technical report, but to generate evidence leaders can use to guide decisions about programs, investments, and strategy.

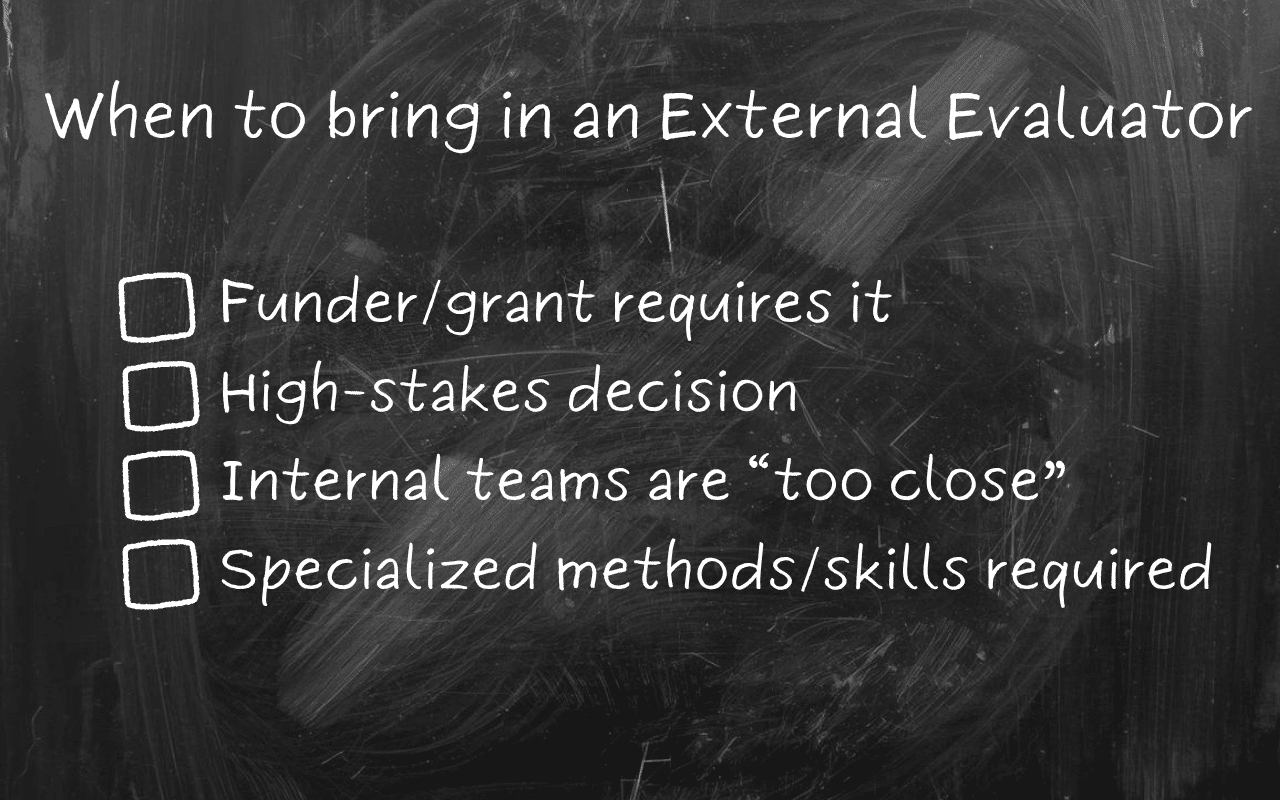

Four Signs It Is Time to Bring in an External Evaluator

Internal monitoring plays an important role in many organizations. However, several situations make external evaluation especially valuable.

1. A funder or grant requires an independent evaluation

Many foundations and government grants require independent evaluation to ensure impartial evidence of program impact.

Internal reporting may help track implementation, but it often does not meet the credibility standards required by external funders. Independent evaluation demonstrates that results were assessed objectively and using appropriate methods.

For nonprofits and school districts seeking grant funding, external evaluation can therefore become a critical part of demonstrating program effectiveness and strengthening funding proposals.

2. The decision is high stakes

Some evaluations influence major organizational decisions. In K–12 settings, the most useful evaluation for those decisions is rarely the longest or most complex. It is the one that gives leaders credible evidence in time to act.

A district may be determining whether to renew a large software contract. A nonprofit may be deciding whether to scale a program to new districts. An edtech company may want credible evidence to support expansion or strengthen its sales strategy.

Credible evaluation also does not always require waiting years for a large-scale study. In many cases, leaders need rigorous insight within the timeframe of real-world decisions. Well-designed rapid evaluations can provide meaningful evidence without delaying action.

3. Internal teams are too close to the work

Teams responsible for implementing a program may face pressure to justify existing investments or defend ongoing initiatives. Organizational dynamics can influence how results are interpreted.

An external evaluator can help reduce those concerns. Because they are independent of program implementation, they can analyze the data with greater neutrality.

This independence often makes it easier for stakeholders to trust the findings, even when the results are mixed or unexpected.

4. The evaluation requires specialized methods

Some evaluation questions require methods that go beyond routine data analysis.

Estimating program impact often involves comparing outcomes for participating students with outcomes for similar students who did not receive the intervention. Approaches such as multivariate matching and other quasi-experimental designs are used to construct that comparison group.

These approaches fall within the broader field of education impact evaluation methods and may require expertise that internal teams do not have or rarely use.

In those cases, partnering with a third-party evaluator can ensure the study design and analyses meet professional standards.
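To make the idea of a matched comparison concrete, here is a minimal Python sketch. It is deliberately simplified: it matches on a single variable (a prior test score) using nearest-neighbor matching without replacement, whereas a real study would typically match on several characteristics and check the quality of the matches. The function name and all of the student data are hypothetical and invented for illustration; this is not a description of any particular evaluator's method.

```python
from statistics import mean


def match_and_estimate(participants, comparison_pool):
    """Pair each participant with the unused comparison student whose prior
    score is closest, then average the differences in outcomes."""
    available = list(comparison_pool)
    differences = []
    for prior, outcome in participants:
        # Nearest neighbor on the prior score (matching without replacement).
        best = min(available, key=lambda student: abs(student[0] - prior))
        available.remove(best)
        differences.append(outcome - best[1])
    return mean(differences)


# Hypothetical (prior_score, outcome_score) pairs, made up for this example.
participants = [(40, 52), (55, 63), (70, 78)]
comparison_pool = [(41, 48), (54, 60), (72, 75), (90, 95), (30, 35)]

estimate = match_and_estimate(participants, comparison_pool)
print(f"Estimated difference in outcomes: {estimate:.2f} points")
```

Even in this toy version, the design choice matters: each participant is compared with a student who started from a similar place, not with the full pool of non-participants, which is what makes the comparison more credible than a simple average-to-average difference.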

How to Set Up an Evaluation for Success

Once an organization decides to bring in an external evaluator, preparation becomes essential. Clear expectations at the start help ensure the evaluation produces useful findings.

Start with purpose

Many organizations begin by asking what type of evaluation design they should use. A more productive starting point is clarifying the purpose of the evaluation.

Organizations may want to improve a product or program, support renewal decisions, strengthen marketing claims, secure grant funding, or build evidence that meets ESSA evidence requirements.

Clear goals lead to better evaluation questions. Strong evaluations typically focus on a small number of specific questions, such as:

- Does the program improve student achievement?

- Which student groups benefit most?

- Does implementation intensity influence outcomes?

- Was the program implemented consistently across sites?

These questions guide the design of the evaluation and help ensure the analysis produces meaningful answers.

Be clear about constraints

Academic calendars, testing schedules, data access policies, and privacy requirements all influence what is feasible. Communicating these constraints early allows evaluators to design studies that are both rigorous and practical.

Spell out data access and expectations

Organizations should also clarify what data is available and how it can be shared. In many cases, the most practical evaluations rely on data districts already collect rather than creating new reporting demands for staff.

This may include program participation records, historical assessment data, demographic information, or attendance data. A clear data management plan should address data security, ownership of raw data, and how findings will be published while protecting student privacy.

Define deliverables, timeline, and budget

Clear expectations about deliverables also improve the evaluation process.

Some audiences require detailed technical reports, while others benefit from executive summaries or stakeholder presentations. Evaluation timelines should align with the academic calendar, including baseline measurement, implementation periods, and outcome data availability.

Budget expectations must also be realistic. Organizations sometimes hope to conduct highly complex studies with limited funding. Transparent budget discussions allow evaluators to propose designs that balance rigor with feasibility.

What to Ask for in an RFP or Proposal

When selecting an external evaluator, organizations should request proposals that address several key elements.

A strong proposal should include:

- A clear understanding of the program and evaluation context

- A proposed evaluation design and analytic approach

- A workplan outlining milestones and responsibilities

- A staffing plan identifying who will conduct the work

- A detailed budget and cost justification

- Examples of prior evaluation work or reports

Selecting an evaluator should feel less like hiring a vendor and more like choosing a partner.

External Evaluation Should Reduce Burden, Not Create It

External evaluation can be one of the most effective ways to generate credible evidence about program impact. Independent analysis strengthens trust and helps leaders make informed decisions.

The purpose of evaluation is not simply to produce a report. It is to help leaders understand what is working, what needs improvement, and where resources will make the greatest difference for students.

For districts, edtech companies, and nonprofit education organizations, the goal is not evaluation for its own sake. It is clear, credible evidence that supports better decisions without creating unnecessary burden for internal teams. That is the value of a rapid, independent approach built around real-world timelines and existing student data.